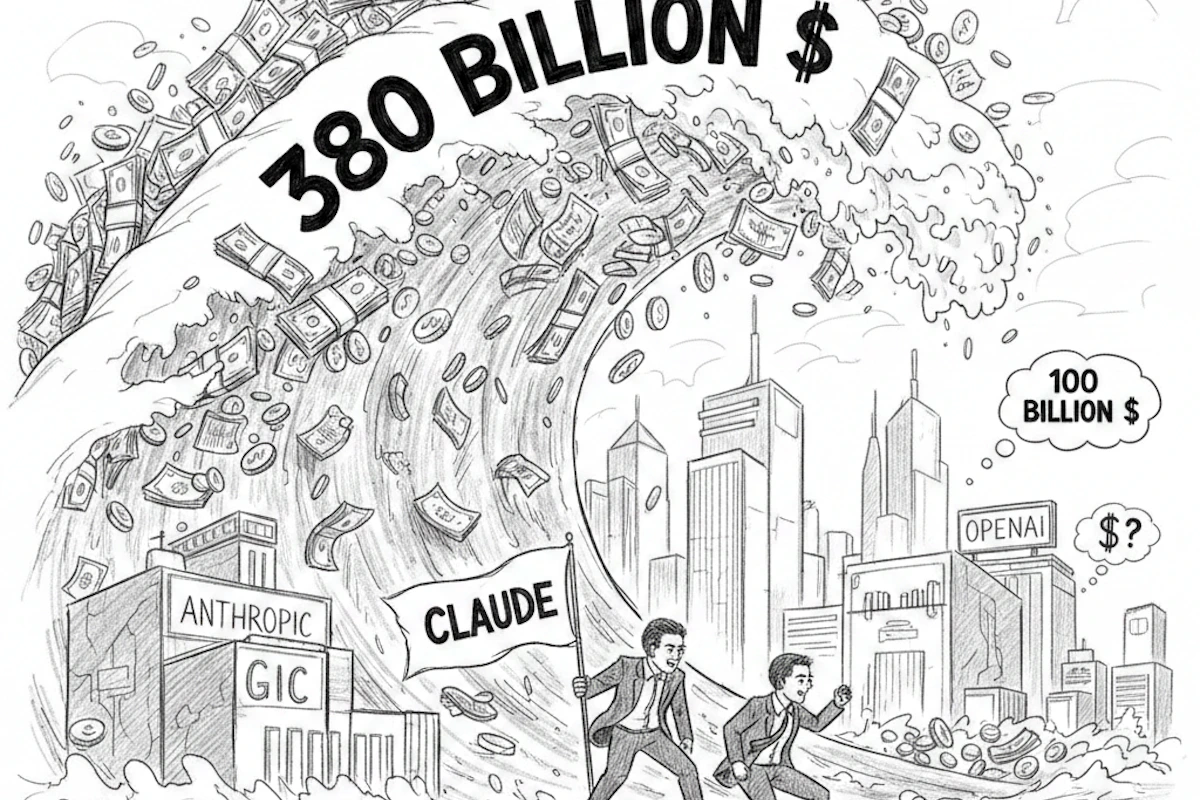

In a dramatic turnaround that reshapes the alliances between Silicon Valley and Washington, the Trump administration has made its move: Anthropic is out, and OpenAI is in. This shift, justified by national security imperatives, raises urgent questions regarding AI ethics and mass surveillance.

The ousting: Anthropic labelled a “Risk”

Following the collapse of negotiations with the Pentagon, the axe has fallen. Donald Trump has ordered the total removal of Anthropic’s technology from all federal agencies within six months. The justification is blunt: the company is now officially classified as a “supply chain risk.”

While the technical specifics of this “risk” remain opaque, observers view it as a direct sanction for Anthropic’s rigid stance on safety principles, specifically its refusal to relax its safety protocols for offensive military applications.

OpenAI: A lightning deal in the shadows

OpenAI wasted no time. In the immediate wake of Anthropic’s exclusion, Sam Altman’s firm secured a “lightning deal” with the Department of Defense (DoD). The objective: to deploy its models within highly classified environments.

The “Red Line” controversy

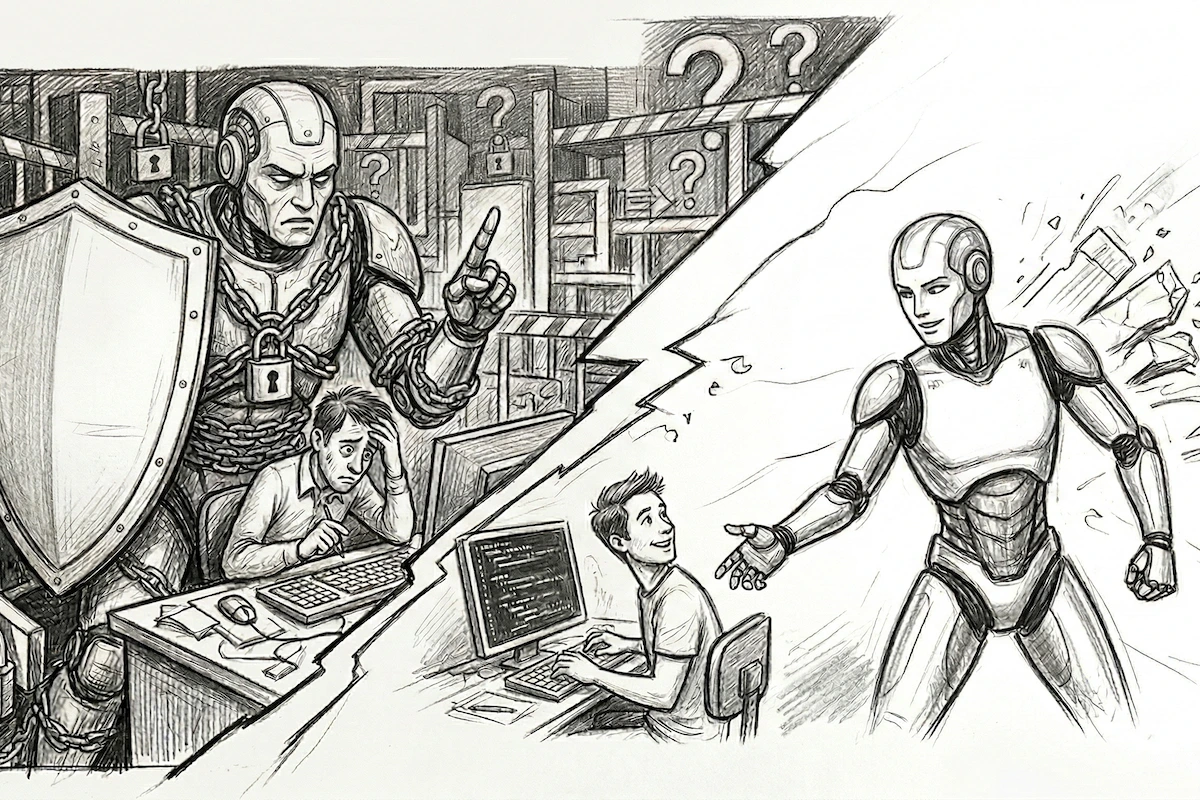

This partnership has sparked widespread scepticism. Why did OpenAI succeed where Anthropic failed?

- Anthropic’s stance: A categorical refusal to participate in mass surveillance or the development of autonomous lethal weapons.

- OpenAI’s position: The company claims to maintain the exact same ethical boundaries, a statement that many industry experts find difficult to swallow given the unprecedented speed of the contract’s signing.


Technical architecture vs. contractual clauses

In its defence, OpenAI presents a structural argument. The company maintains that safety does not rely solely on written promises, but on an exclusive cloud-based architecture.

“Deployment via our servers prevents the direct integration of AI into physical weapons systems,” OpenAI leadership insists.

In other words, as long as OpenAI keeps control of the “cloud switch,” its models theoretically cannot be repurposed as the autonomous brain of a lethal drone.

The shadow of domestic surveillance

Despite these assurances, the deal remains alarming for civil liberties. Critics, including Mike Masnick, are sounding the alarm. The point of friction? Executive Order 12333. The order authorises data interception for foreign intelligence purposes, and critics argue it could be exploited as a loophole to bypass protections against domestic surveillance.

By integrating with the DoD, OpenAI could, willingly or otherwise, become a critical cog in the state surveillance machine.

Sam Altman’s “Risky Bet”

Aware of the potentially disastrous impact on his company’s brand, Sam Altman has admitted the deal was “rushed.” The OpenAI CEO is playing a complex game:

- Defusing tensions between the government and the AI industry.

- Avoiding Anthropic’s fate, even at the cost of an immediate and severe media backlash.

It is a high-stakes balancing act. By opting for close collaboration with the Trump administration, OpenAI secures contractual dominance but risks sacrificing its perceived ethical neutrality.

Source: OpenAI Blog / Bsky.app (Mike Masnick)