The history of Artificial Intelligence is more than a timeline of silicon chips and lines of code; it is a Promethean quest driven by the vision of pioneers and the dedication of those, like the Free AI Online team, who are deeply passionate about its potential.

It represents the collision of philosophy, mathematics, biology, and engineering: a narrative of humanity’s attempt to decode the secret of its own consciousness and replicate it in a machine. As we revisit the milestones of this technology, it becomes clear that we are witnessing a force that is set to change the world.

I. Philosophical roots and formal logic (Antiquity – 1940)

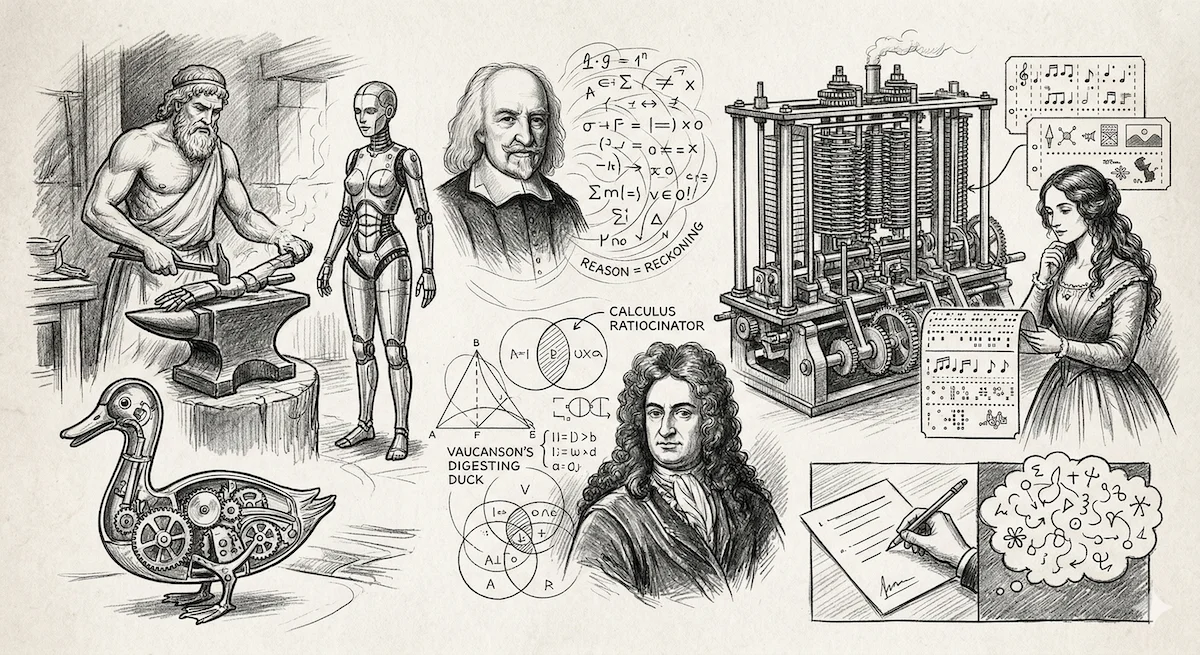

Long before electricity, the human mind conceptualized artificial beings. From the golden handmaidens of Hephaestus in Greek mythology to the 18th-century automatons like Vaucanson’s Digesting Duck, the desire to mimic the form of life is ancient.

However, the intellectual blueprint for AI lies in the formalization of thought.

In the 17th century, Thomas Hobbes boldly claimed that “reason is nothing but reckoning” (calculation).

This suggested that if thought follows logical rules, a machine could eventually think. Gottfried Wilhelm Leibniz later envisioned a universal symbolic language, the characteristica universalis, paired with a calculus ratiocinator: a reasoning calculus that would allow humans to settle any logical dispute through calculation.

The 19th century provided the first mechanical hardware.

Charles Babbage designed the “Analytical Engine,” the first design for a general-purpose mechanical computer. His collaborator, Ada Lovelace, is often regarded as the world’s first programmer. She famously intuited that the machine could process more than just numbers: it could manipulate symbols, musical notes, or images.

However, she also issued a famous caveat: “The Analytical Engine has no pretensions whatever to originate anything. It can do whatever we know how to order it to perform.” This debate over “machine creativity” remains at the heart of AI today.

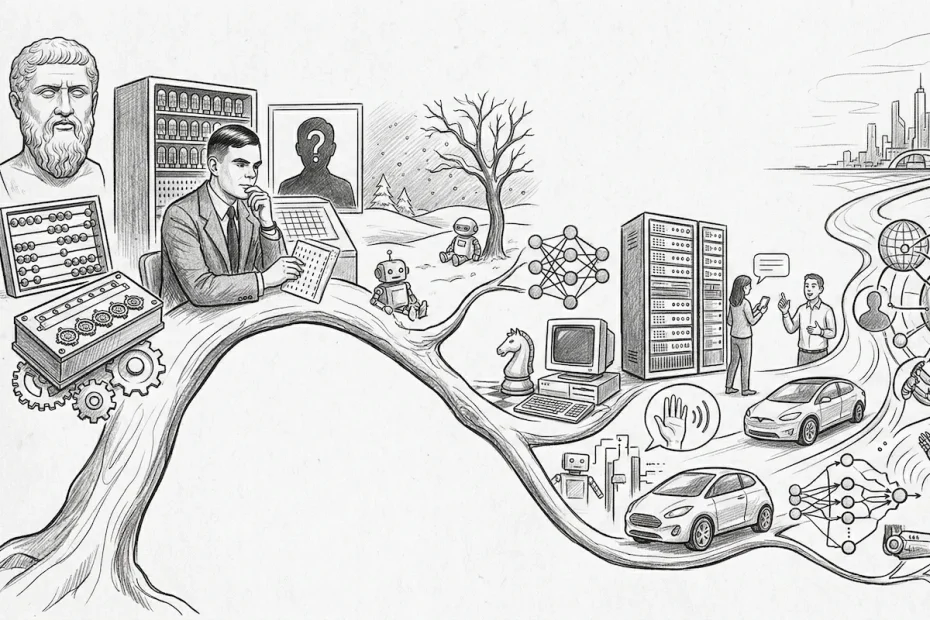

II. The spark: Cybernetics and the Turing era (1940 – 1956)

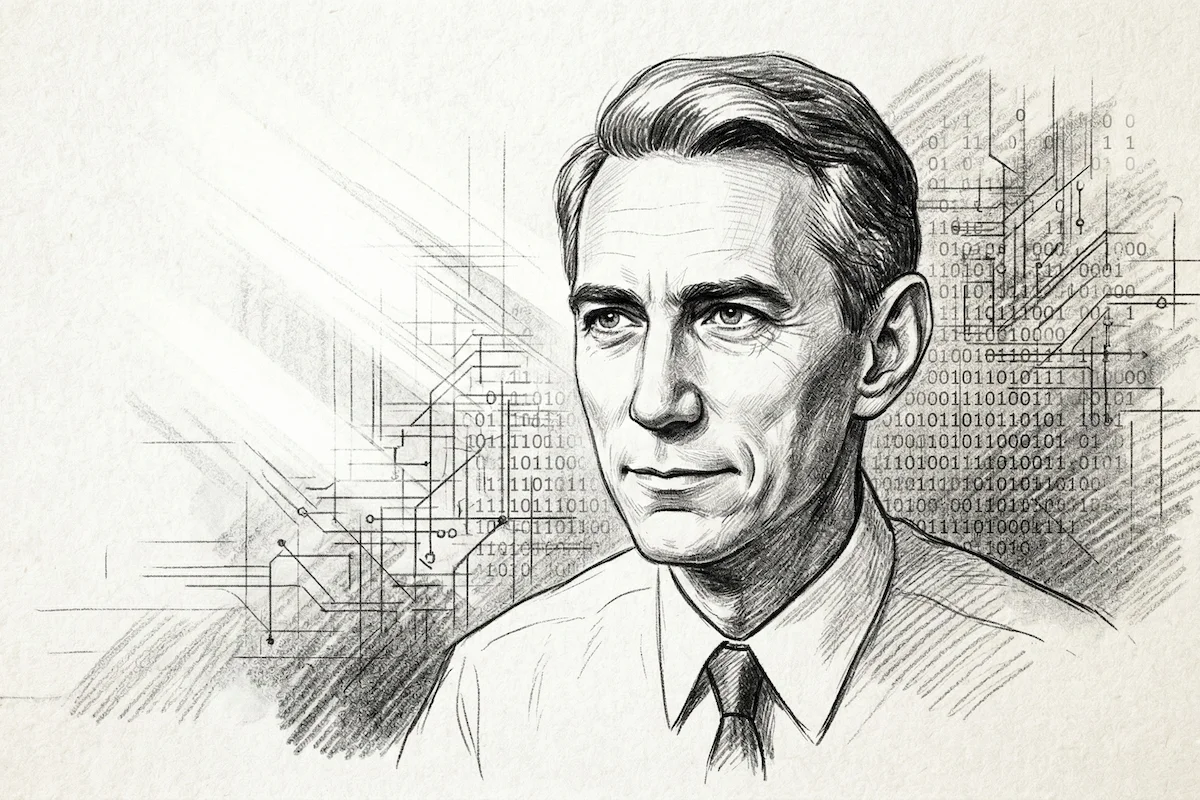

World War II acted as a massive technological catalyst. Alan Turing, who had already laid the foundations of computability theory in 1936, helped break the Enigma code during the war. In 1950, he published Computing Machinery and Intelligence, where he bypassed the metaphysical question “Can machines think?” and replaced it with a pragmatic experiment: the Imitation Game (now known as the Turing Test).

Simultaneously, the field of Cybernetics, led by Norbert Wiener, began studying control and communication in living beings and machines. In 1943, Warren McCulloch and Walter Pitts published a landmark paper showing that networks of simple artificial neurons could, in principle, compute any logical function. This was the birth of Connectionism, even though the computing power needed to exploit it did not yet exist.
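To make the McCulloch and Pitts idea concrete, here is a minimal sketch of threshold neurons wired into logic gates. The function names (`mp_neuron`, `AND`, `OR`, `NOT`, `XOR`) are illustrative, and the negative weight used for inhibition is a common simplification rather than the exact formalism of the 1943 paper.

```python
def mp_neuron(inputs, weights, threshold):
    """Fire (return 1) when the weighted sum of binary inputs reaches the threshold."""
    total = sum(w * x for w, x in zip(weights, inputs))
    return 1 if total >= threshold else 0


def AND(a, b):
    # Both inputs must be active to reach a threshold of 2.
    return mp_neuron([a, b], [1, 1], threshold=2)


def OR(a, b):
    # A single active input is enough to reach a threshold of 1.
    return mp_neuron([a, b], [1, 1], threshold=1)


def NOT(a):
    # An inhibitory (negative) weight with threshold 0 inverts the input.
    return mp_neuron([a], [-1], threshold=0)


def XOR(a, b):
    # XOR needs two layers of neurons: (a OR b) AND NOT (a AND b).
    return AND(OR(a, b), NOT(AND(a, b)))


if __name__ == "__main__":
    for a in (0, 1):
        for b in (0, 1):
            print(a, b, AND(a, b), OR(a, b), XOR(a, b))
```

Because AND, OR, and NOT are enough to build any Boolean expression, stacking such units is one way to see why these networks can represent any logical function.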

III. The golden age and symbolic AI (1956 – 1974)

In 1956, the Dartmouth Conference marked the official birth of the field. John McCarthy (who coined the term “Artificial Intelligence”), Marvin Minsky, Herbert Simon, and Allen Newell gathered with a radical premise: that every aspect of learning or intelligence could be so precisely described that a machine could be built to simulate it.

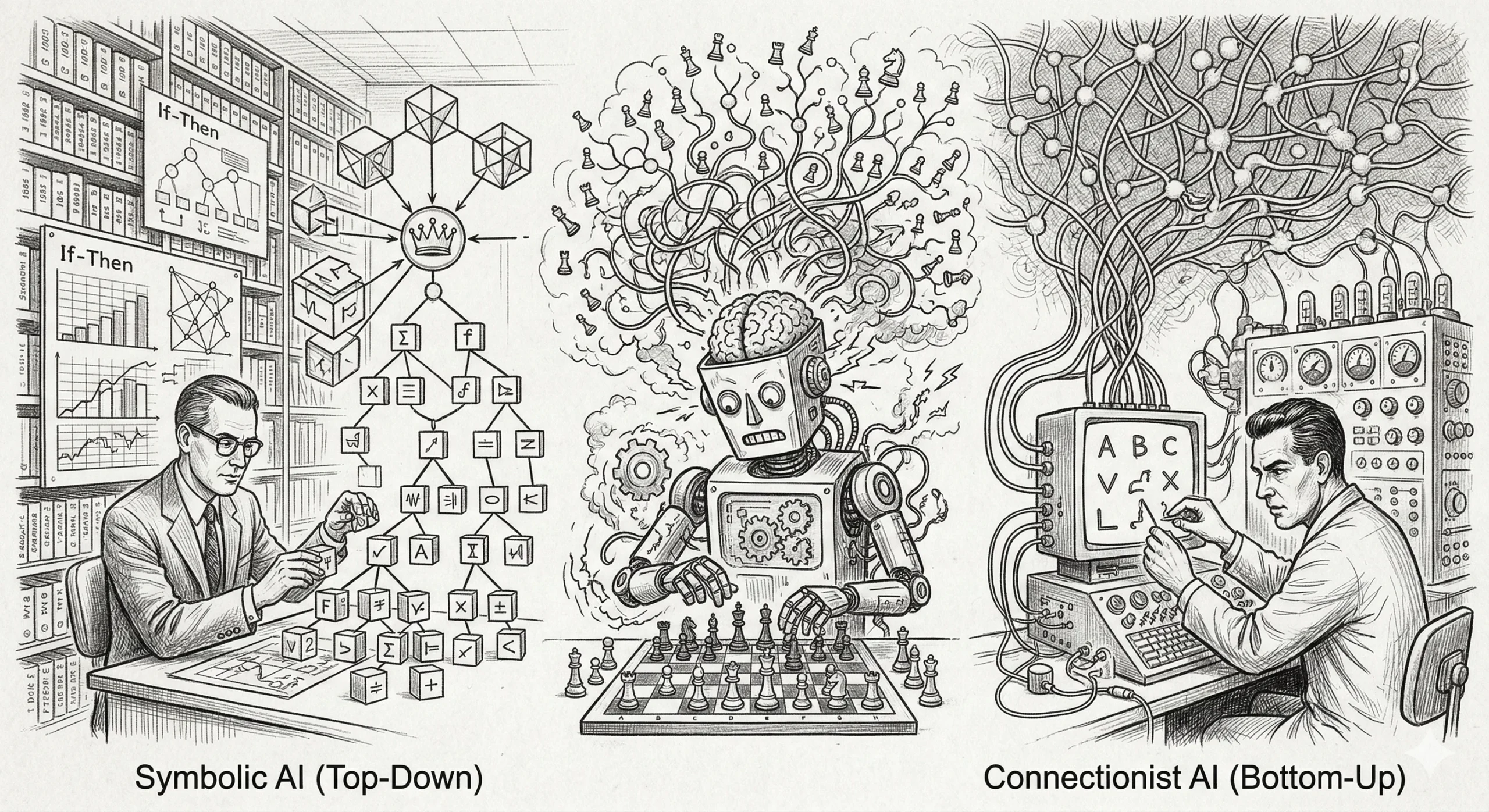

Two competing schools of thought emerged:

- Symbolic AI (Top-Down): The belief that intelligence comes from manipulating explicit logical rules (If-Then statements).

- Connectionist AI (Bottom-Up): The attempt to mimic the biological brain. In 1958, Frank Rosenblatt created the Perceptron, the ancestor of modern neural networks, which could “learn” to recognize simple shapes.
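The contrast between the two schools is easier to see with a small example of the bottom-up approach. The following is a minimal sketch of the perceptron learning rule, assuming a toy task (the logical OR function) as a stand-in for Rosenblatt's shape recognition; `train_perceptron`, `predict`, and `OR_DATA` are illustrative names, not part of any historical implementation.

```python
def train_perceptron(samples, epochs=20, lr=1.0):
    """Perceptron learning rule: adjust the weights only when a prediction is wrong."""
    n_inputs = len(samples[0][0])
    weights = [0.0] * n_inputs
    bias = 0.0
    for _ in range(epochs):
        errors = 0
        for x, target in samples:
            activation = sum(w * xi for w, xi in zip(weights, x)) + bias
            prediction = 1 if activation > 0 else 0
            delta = target - prediction  # -1, 0, or +1
            if delta != 0:
                # Move the decision boundary toward the misclassified example.
                weights = [w + lr * delta * xi for w, xi in zip(weights, x)]
                bias += lr * delta
                errors += 1
        if errors == 0:
            break  # every training example is classified correctly
    return weights, bias


def predict(weights, bias, x):
    return 1 if sum(w * xi for w, xi in zip(weights, x)) + bias > 0 else 0


# Toy training set (an assumption for illustration): the logical OR function.
OR_DATA = [((0, 0), 0), ((0, 1), 1), ((1, 0), 1), ((1, 1), 1)]
weights, bias = train_perceptron(OR_DATA)
```

No rule for OR is ever written down: the weights are found from examples alone. A single perceptron can only learn linearly separable tasks like this one, which is why functions such as XOR are out of its reach.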

Optimism was sky-high. In 1958, Herbert Simon and Allen Newell predicted that a computer would be the world chess champion within ten years. However, researchers soon hit a wall: “combinatorial explosion.” As problems became slightly more complex, the number of possibilities to examine grew exponentially, and computers of the time simply couldn’t handle them.
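A rough calculation shows how quickly this wall appears. The sketch below counts the positions at the bottom of a full game tree, assuming a constant branching factor of 35 moves per position, a commonly cited average for chess; the function name `positions_to_examine` is illustrative.

```python
def positions_to_examine(branching_factor, depth):
    """Count the leaf positions of a full game tree: branching_factor ** depth."""
    return branching_factor ** depth


# Assumption for illustration: about 35 legal moves per chess position.
CHESS_BRANCHING = 35

if __name__ == "__main__":
    for depth in (2, 4, 6, 8, 10):
        count = positions_to_examine(CHESS_BRANCHING, depth)
        print(f"looking {depth} moves ahead: {count:,} positions")
```

Each additional move of lookahead multiplies the work by the branching factor, so modest increases in depth overwhelm any fixed amount of computing power.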

IV. The first “AI Winter” and expert systems (1974 – 1997)

By the mid-70s, the hype collapsed. The Lighthill Report in the UK and criticism from the US government led to a massive withdrawal of funding. This was the first “AI Winter.”

AI returned in the 1980s through a pragmatic approach: Expert Systems. Instead of trying to create a general intelligence, developers built software specialized in niche fields (medical diagnosis, mineral exploration). These systems “memorized” thousands of rules provided by human experts. Japan’s “Fifth Generation” project also poured billions into making AI the future of computing.
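The core mechanism of such a system can be sketched in a few lines. The example below is a minimal forward-chaining loop over if-then rules; the rules and fact names are hypothetical toy data invented for illustration, not taken from any real expert system.

```python
def forward_chain(facts, rules):
    """Apply if-then rules repeatedly until no new fact can be derived."""
    known = set(facts)
    changed = True
    while changed:
        changed = False
        for conditions, conclusion in rules:
            # Fire the rule when all of its conditions are already known.
            if conclusion not in known and set(conditions) <= known:
                known.add(conclusion)
                changed = True
    return known


# Hypothetical toy rules: (conditions, conclusion).
RULES = [
    (("fever", "cough"), "possible_flu"),
    (("possible_flu", "short_of_breath"), "refer_to_doctor"),
]

derived = forward_chain({"fever", "cough", "short_of_breath"}, RULES)
```

Every conclusion the system can reach has to be anticipated by a hand-written rule, which hints at why large rule bases became costly to maintain.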

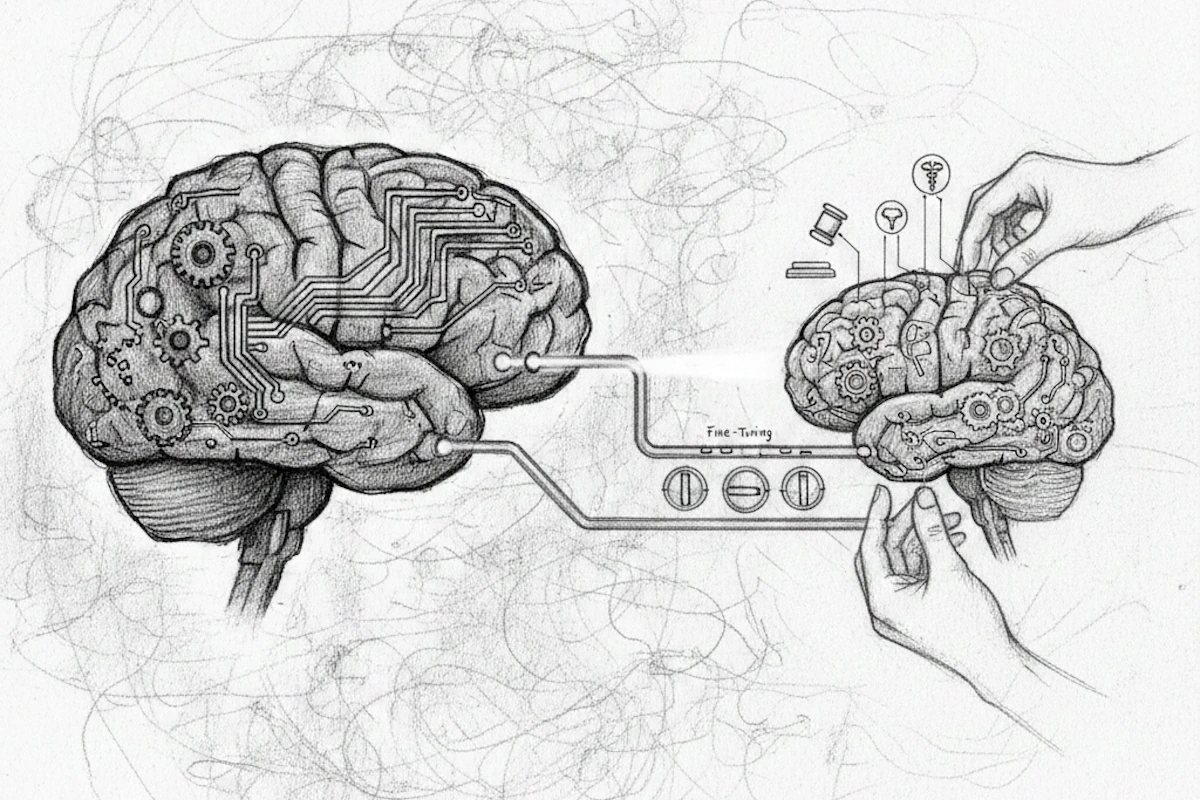

However, Expert Systems were brittle; they couldn’t handle uncertainty and were expensive to update. This led to the second AI Winter (1987–1993). Yet, in the shadows, pioneers like Geoffrey Hinton, Yann LeCun, and Yoshua Bengio were perfecting backpropagation, a method that allowed multi-layered neural networks to learn from their errors.

V. Big Data and the Deep Learning Big Bang (1997 – 2017)

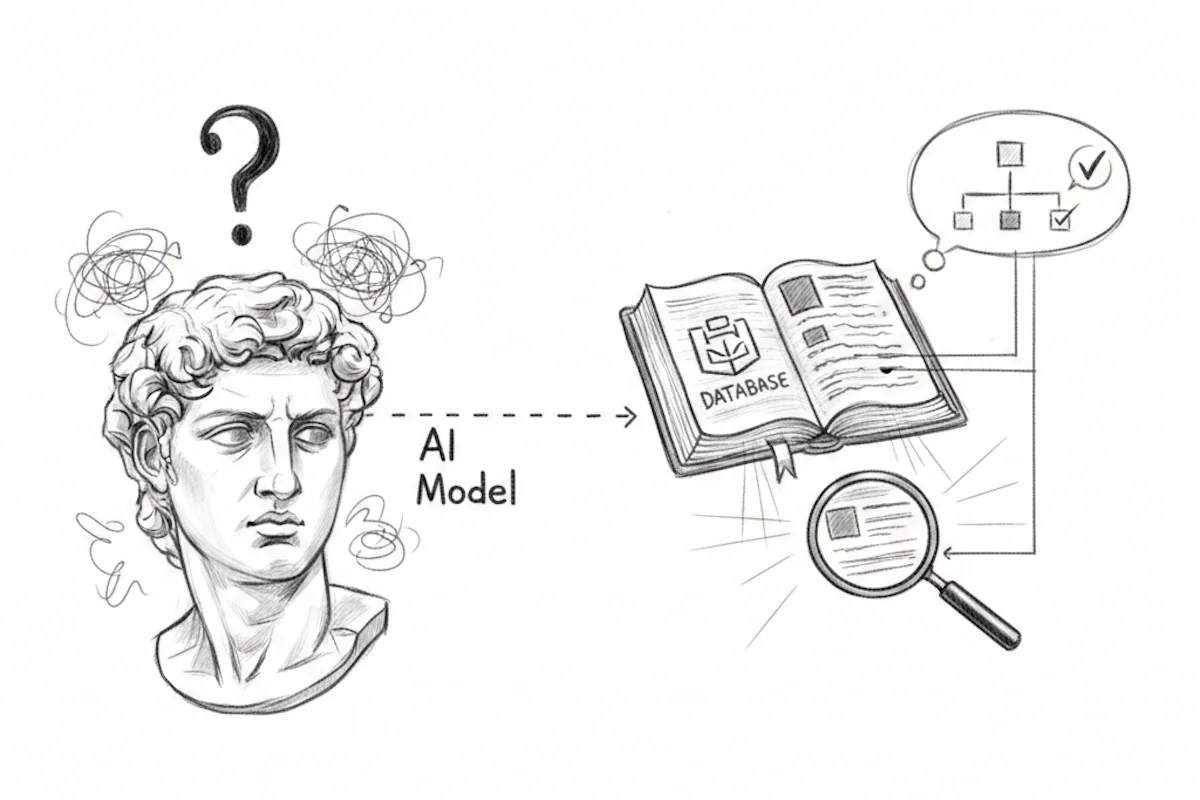

At the turn of the millennium, AI shifted from a logic-based discipline to a statistical one. The goal was no longer to tell the machine the rules of the world, but to let the machine infer probabilities from massive amounts of data.

- 1997: IBM’s Deep Blue defeated Garry Kasparov at chess, a victory for “brute force” computing.

- 2011: IBM’s Watson won Jeopardy!, showing that AI could cope with the nuances of human language, including puns.

- 2012: The AlexNet moment. In the ImageNet computer vision competition, a deep convolutional neural network cut the top-5 error rate to about 15%, more than ten percentage points ahead of the runner-up.

Three pillars aligned: Big Data (the internet provided the training material), GPUs (NVIDIA’s chips allowed massive parallel processing), and Deep Learning algorithms. AI was no longer just logical; it was becoming intuitive.

In 2016, DeepMind’s AlphaGo defeated world champion Lee Sedol in Go, a game previously thought to be too complex for machines.

VI. The era of Generative AI and Transformers (2017 – Present)

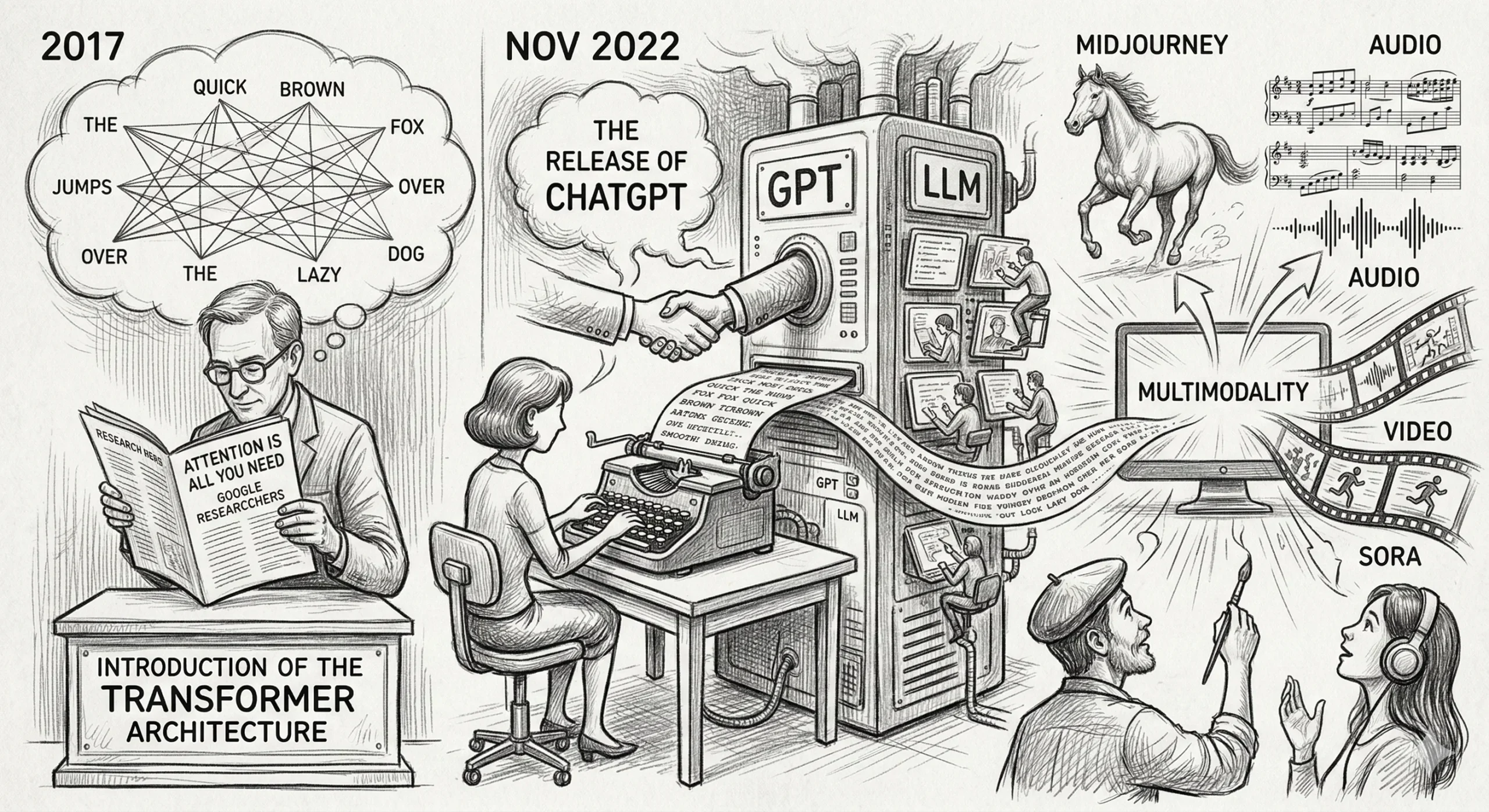

In 2017, Google researchers published “Attention Is All You Need”, introducing the Transformer architecture. This allowed AI to process sequences of data (like text) by “attending” to the most important parts of a sentence regardless of their position.
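The central operation can be illustrated with a minimal sketch of scaled dot-product attention in plain Python. The toy vectors and the names `softmax` and `attention` are assumptions for illustration; a real Transformer adds learned projections, multiple heads, and positional encodings that are omitted here.

```python
import math


def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention(queries, keys, values):
    """Scaled dot-product attention: softmax(Q K^T / sqrt(d)) V."""
    d = len(keys[0])
    outputs, all_weights = [], []
    for q in queries:
        # Similarity of this query to every key, scaled by sqrt(d).
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d) for k in keys]
        weights = softmax(scores)
        # Output is the weighted average of the value vectors.
        mixed = [sum(w * v[j] for w, v in zip(weights, values))
                 for j in range(len(values[0]))]
        outputs.append(mixed)
        all_weights.append(weights)
    return outputs, all_weights


# Toy data (hypothetical): three tokens, each with a 2-dimensional key and value.
keys = [[0.0, 4.0], [4.0, 0.0], [0.0, 0.0]]
values = [[1.0, 0.0], [0.0, 1.0], [0.5, 0.5]]
query = [0.0, 1.0]

outputs, weights = attention([query], keys, values)
```

The weights depend only on how well the query matches each key, not on where the key sits in the sequence, so reordering the tokens moves the weights with them and leaves the output unchanged.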

This led to the rise of Large Language Models (LLMs):

- GPT (Generative Pre-trained Transformer): Developed by OpenAI, these models learn by predicting the next token (roughly, the next word) in a sequence across billions of pages of text.

- November 2022: The release of ChatGPT brought LLMs to a mass audience. For the first time, AI was not a hidden tool but a conversational interface accessible to everyone.

- Multimodality: AI moved beyond text to generate high-fidelity images (Midjourney), audio, and even video (Sora).

VII. The future: Towards AGI?

We are now in an era where AI is an essential social infrastructure. The focus has shifted from “Can we do it?” to “How do we align it with human values?”

The ultimate goal for many is AGI (Artificial General Intelligence), a machine capable of performing any intellectual task a human can do. This raises profound questions: Is consciousness an emergent property of computation? How do we ensure safety in a world where machines might out-think their creators?

The history of AI has moved from the rigid logic of the 1950s to the fluid, creative probabilities of the 2020s.

It is no longer just a branch of computer science; it is the new substrate upon which humanity will build its future.
