In the rapidly evolving world of Artificial Intelligence, Large Language Models (LLMs) like Claude, Grok or GPT-5 have often been compared to brilliant scholars with a slight problem: they have a “knowledge cutoff.” They know a great deal about the world up to the point their training ended, but they know nothing about what happened this morning or what is inside your company’s private servers.

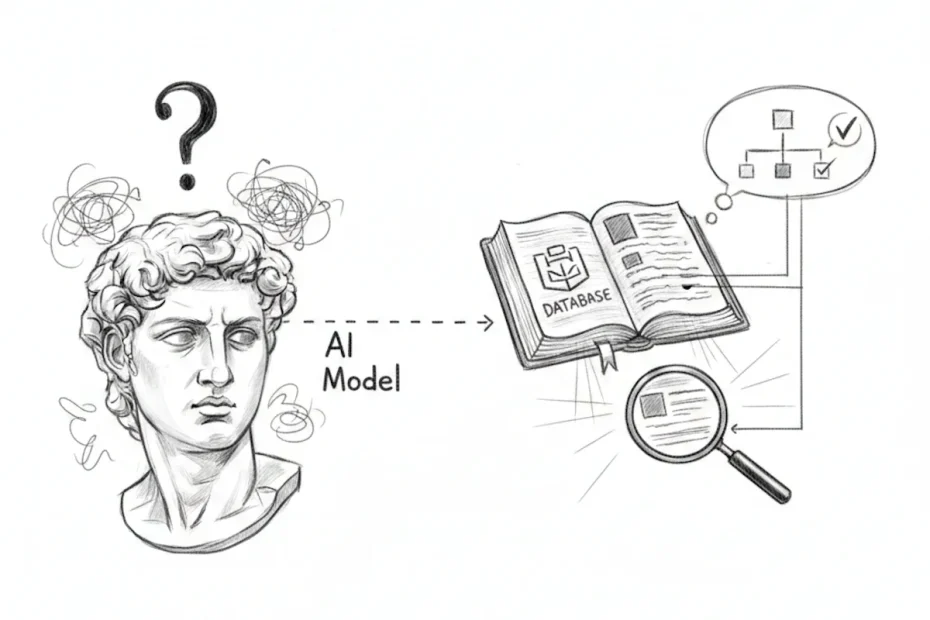

This is where Retrieval-Augmented Generation (RAG) comes in. It is an architecture that lets an AI consult a specific, private, or up-to-date library of information before it answers. In short, RAG gives the AI a set of “eyes” to read your data at the moment a question is asked.

The problem: Why static AI isn’t enough

To understand RAG, we first need to look at the two major hurdles of standard LLMs:

- Hallucinations: When an AI doesn’t know the answer to a specific question (like “What are our Q3 shipping protocols?”), it often tries to be helpful by “predicting” a plausible-sounding but entirely fake answer.

- Stale knowledge: Training a foundation model costs millions of dollars and takes months. You cannot retrain a model every time you update a PDF or a price list.

RAG shifts the paradigm. Instead of relying on the AI’s memory, we treat the AI as a world-class reasoning engine and provide it with the relevant “textbooks” it needs to solve a specific task.

How RAG works: The three pillars

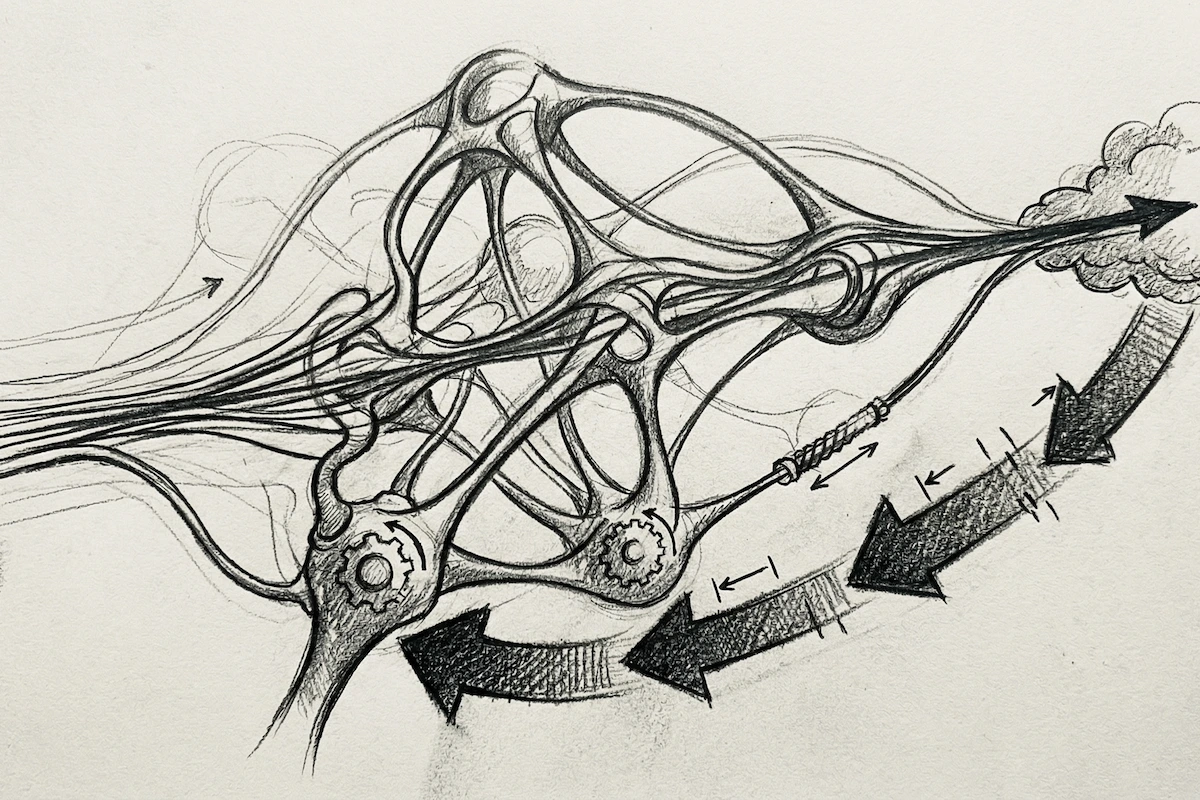

The RAG process doesn’t change the “brain” of the AI; it changes the “input.” It follows a sophisticated three-step dance:

1. Retrieval: Finding the needle in the haystack

When a user asks a question, the system doesn’t go straight to the AI. First, it searches your own database. Using a technique called vector search, the system looks for the most semantically relevant snippets of information. It doesn’t just match keywords; it compares the meaning of the query with the meaning of each snippet, so a question about “delivery times” can surface a passage about “shipping.”
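To make the retrieval step concrete, here is a minimal sketch of vector search using cosine similarity. The three-dimensional vectors, the `INDEX` list, and the `retrieve` function are illustrative assumptions; a real system would obtain its vectors from an embedding model and would usually store them in a dedicated vector database.

```python
import math

def cosine_similarity(a, b):
    """Return the cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

# Hypothetical snippets with made-up embeddings (assumption: a real
# embedding model would produce vectors with hundreds of dimensions).
INDEX = [
    ("Orders ship within two business days.", [0.9, 0.1, 0.0]),
    ("Refunds are issued within 14 days.", [0.1, 0.9, 0.1]),
    ("The office is closed on public holidays.", [0.0, 0.2, 0.9]),
]

def retrieve(query_vector, index, top_k=2):
    """Return the top_k snippet texts most similar to the query vector."""
    scored = [(cosine_similarity(query_vector, vec), text) for text, vec in index]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [text for _, text in scored[:top_k]]

# Pretend embedding of the question "How fast is delivery?"
query_vector = [0.8, 0.2, 0.1]
top_snippets = retrieve(query_vector, INDEX, top_k=1)
print(top_snippets)
```

Note that the question and the best-matching snippet share no keywords; the match comes only from the two vectors pointing in a similar direction.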

2. Augmentation: Adding context

Once the system finds the right paragraphs or data points, it “augments” the user’s prompt. It wraps the original question with the retrieved facts. For example: “Using only the following technical manual excerpts, answer this user’s question about the engine turbine.”
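As a rough illustration of augmentation, the sketch below wraps a question with retrieved excerpts. The function name `build_augmented_prompt`, the prompt wording, and the sample excerpts are assumptions made for this example, not a fixed standard.

```python
def build_augmented_prompt(question, snippets):
    """Wrap the user's question with numbered excerpts from retrieval."""
    numbered = "\n".join(f"[{i}] {s}" for i, s in enumerate(snippets, start=1))
    return (
        "Using only the following excerpts, answer the user's question.\n"
        "If the excerpts do not contain the answer, say so.\n\n"
        f"Excerpts:\n{numbered}\n\n"
        f"Question: {question}"
    )

# Made-up excerpts standing in for the output of the retrieval step.
prompt = build_augmented_prompt(
    "How often should the engine turbine be inspected?",
    [
        "The turbine must be inspected every 500 operating hours.",
        "Inspection results are logged in the maintenance record.",
    ],
)
print(prompt)
```

Numbering the excerpts is one possible design choice: it gives the model a simple way to refer back to the passage it used.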

3. Generation: The final answer

The LLM receives the question and the “cheat sheet” of facts. It then uses its natural language skills to draft a response that is accurate, fluent, and, most importantly, anchored in the provided data.
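The three steps can be tied together in a few lines. This is only a sketch: `answer_with_rag`, `stub_retrieve`, and `stub_generate` are hypothetical names, and the stub generator simply echoes the first fact where a real system would call an LLM.

```python
from typing import Callable, List

def answer_with_rag(
    question: str,
    retrieve: Callable[[str], List[str]],
    generate: Callable[[str], str],
) -> str:
    """Run retrieval, augmentation, and generation in sequence."""
    snippets = retrieve(question)                      # 1. Retrieval
    context = "\n".join(f"- {s}" for s in snippets)
    prompt = (                                         # 2. Augmentation
        "Using only the facts below, answer the question.\n"
        f"Facts:\n{context}\n"
        f"Question: {question}"
    )
    return generate(prompt)                            # 3. Generation

def stub_retrieve(question: str) -> List[str]:
    """Stand-in for vector search; always returns one made-up snippet."""
    return ["Orders ship within two business days."]

def stub_generate(prompt: str) -> str:
    """Stand-in for an LLM call; repeats the first fact in the prompt."""
    first_fact = next(
        line[2:] for line in prompt.splitlines() if line.startswith("- ")
    )
    return f"According to the provided facts: {first_fact}"

answer = answer_with_rag("How fast is delivery?", stub_retrieve, stub_generate)
print(answer)
```

Passing `retrieve` and `generate` in as functions keeps the pipeline independent of any particular vector database or model provider.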

Why RAG is a game-changer for businesses

For any AI platform, RAG is the bridge between “cool tech” and “business-critical tool.” Here is why it is becoming the industry standard:

- Verifiable accuracy: One of the best features of RAG is its ability to provide citations. The AI can say, “According to Section 4 of the Safety Handbook…” This transparency reduces the “black box” feel of AI, because a reader can check the answer against the cited source.

- Cost-efficiency: Re-training or “Fine-Tuning” a model is expensive and technical. RAG is much more affordable because you are simply managing a database, not teaching a trillion-parameter model new tricks.

- Data security: With RAG, your sensitive data stays in your secure database. It is only retrieved when needed and is never “absorbed” into the public AI model’s permanent memory.

- Real-time updates: Did your company policy change ten minutes ago? Just update the document in your library. Once the new version has been indexed, the RAG system will use it for the very next query.
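The citation and real-time update points above can be sketched with a tiny in-memory store. The `DocumentStore` class, the document IDs, and the two-dimensional vectors are all assumptions made for illustration; a production system would use a real vector database and an embedding model.

```python
class DocumentStore:
    """Toy in-memory index mapping a document ID to its text and vector."""

    def __init__(self):
        self._docs = {}  # doc_id -> (text, vector)

    def upsert(self, doc_id, text, vector):
        """Insert a document, or overwrite the existing one with the same ID."""
        self._docs[doc_id] = (text, vector)

    def search(self, query_vector):
        """Return (doc_id, text) for the document with the highest dot product."""
        def score(item):
            _, (_, vector) = item
            return sum(q * v for q, v in zip(query_vector, vector))

        doc_id, (text, _) = max(self._docs.items(), key=score)
        return doc_id, text

store = DocumentStore()
store.upsert("remote-work-policy",
             "Employees may work remotely two days per week.", [1.0, 0.0])
store.upsert("safety-handbook-s4",
             "Hard hats are required on the shop floor.", [0.0, 1.0])

# Pretend embedding of "How many remote days do I get?"
query_vector = [0.9, 0.1]
before = store.search(query_vector)

# The policy changes: overwrite the document, no model retraining involved.
store.upsert("remote-work-policy",
             "Employees may work remotely three days per week.", [1.0, 0.0])
after = store.search(query_vector)

print(before)
print(after)
```

The returned document ID is what lets the final answer cite its source, and the second search picks up the updated text because the index, not the model, holds the knowledge.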

RAG vs. Fine-Tuning: Which one do you need?

A common question is whether it’s better to fine-tune a model or use RAG. Think of it this way:

- Fine-Tuning is like a student going to medical school for years to learn a specific “vibe” or specialized jargon.

- RAG is like giving that student an open-book exam with the most recent medical journals on their desk.

For most business applications where facts, figures, and updated documents are key, RAG is the winner.

The future of contextual intelligence

The era of generic AI is fading. The future belongs to “Contextual AI”: systems that understand your specific business, your specific customers, and your specific data. RAG is the engine making that possible.

By grounding the creative power of LLMs in the solid reality of your data, RAG transforms a chatbot into a reliable, expert collaborator.

To dive deeper into the mechanics of modern technology, explore our Free AI Online platform. You can find a wealth of other articles covering the core principles and emerging concepts of artificial intelligence in our comprehensive AI Glossary. Whether you are looking to master technical architectures or understand ethical implications, we provide the insights you need to stay ahead.
