If you are a regular user of cutting-edge models like GPT-5 or Grok on Free AI Online, you have likely experienced a baffling moment: the AI gives you an answer with absolute confidence and perfect grammar, but the facts are entirely made up. In research circles, this is known as a hallucination.

Understanding why an AI “lies” (without intent) is one of the most vital steps in becoming a power user. After exploring LLMs and the art of the Prompt, let’s dive into the shadows of this technology to understand its limits.

1. What is an AI hallucination?

An AI hallucination occurs when a language model generates an output that appears coherent and persuasive but is not based on any real-world data or historical fact.

The most deceptive aspect of a hallucination is its form. The AI doesn’t hesitate; it doesn’t stutter. It can fabricate a complete biography of a person who never existed or provide precise statistics on an imaginary stock market with the same authoritative tone it would use to give you a pancake recipe.

2. The mechanics of the mirage: Why does AI hallucinate?

To understand hallucinations, we must revisit the fundamental nature of LLMs. As we’ve discussed, AI does not possess a human-like “understanding” of the world. It is a massive statistical prediction engine.

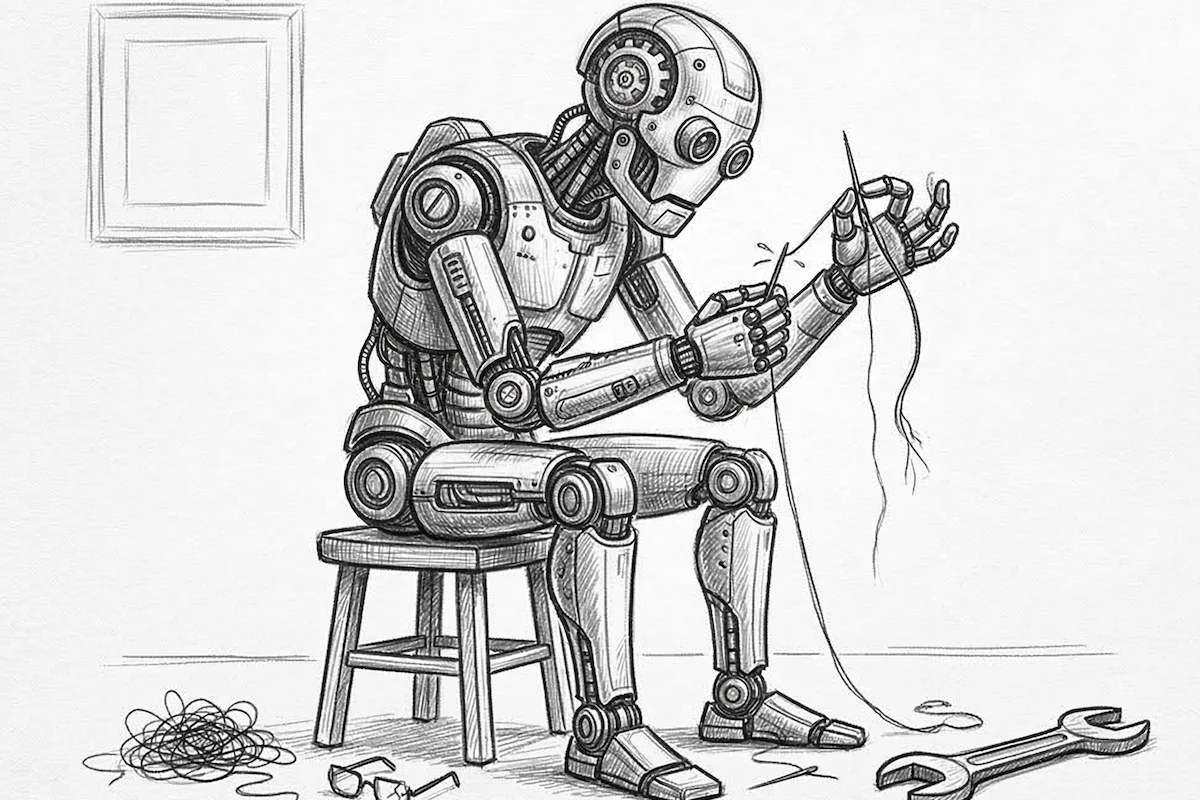

- The probability trap: AI is trained to predict the most likely next word (more precisely, the next token). Sometimes, the most statistically plausible sequence of words is not the factually true one.

- Horror vacui (Fear of the Void): LLMs are tuned to be helpful, which biases them toward giving an answer rather than declining. If information is missing from their training data, they don’t always stop. They attempt to fill the gaps by extrapolating from similar patterns they’ve learned. This is a form of “forced creativity” gone wrong.

- Data compression: During training, the AI compresses billions of data points. Much like a human memory that blurs over time, the AI can mix up two similar concepts (for instance, attributing a medical discovery to the wrong researcher because they worked in the same field).
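The probability trap can be illustrated with a toy sketch. It assumes a made-up table of scores for three candidate words; the numbers and the names `toy_scores`, `softmax`, and `pick_next_word` are invented for illustration and do not come from any real model. The point is only that the selection step looks at probability, never at truth.

```python
import math

def softmax(scores):
    """Turn raw scores into probabilities that sum to 1."""
    peak = max(scores.values())  # subtract the max for numerical stability
    exps = {word: math.exp(s - peak) for word, s in scores.items()}
    total = sum(exps.values())
    return {word: e / total for word, e in exps.items()}

# Hypothetical scores for the word that follows
# "The first person to walk on the Moon was ..."
# These values are invented; a real model has tens of thousands of candidates.
toy_scores = {"Neil": 2.0, "Buzz": 2.3, "Yuri": 1.1}

def pick_next_word(scores):
    """Greedy decoding: return the single most probable word."""
    probs = softmax(scores)
    return max(probs, key=probs.get)

print(pick_next_word(toy_scores))  # prints "Buzz"
```

In this invented example the highest-scoring word is the wrong one, and nothing in `pick_next_word` can detect that: it only compares numbers.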

3. Common types of hallucinations

- Factual hallucinations: Errors regarding dates, proper names, locations, or historical events.

- Reasoning hallucinations: The AI has the correct facts but its logical chain is broken (e.g., making a simple mathematical error in the middle of a complex reasoning process).

- Source hallucinations: This is the most dangerous type. The AI invents evidence (fake news articles, fake book titles, fake URLs) to support its claims.
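Because invented sources look just like real ones, a practical first step is to pull every link out of an answer so that each one can be opened and checked by hand. The helper below is a minimal sketch: the name `list_cited_urls` and the simple regular expression are assumptions chosen for illustration, and the function only lists links; it does not verify that they exist.

```python
import re

# Simplified pattern: "http://" or "https://" followed by anything that is
# not whitespace or a closing bracket. Real-world URL parsing is messier.
URL_PATTERN = re.compile(r"https?://[^\s)>\]]+")

def list_cited_urls(answer):
    """Return the distinct URLs found in an AI answer, in order of appearance."""
    seen = []
    for match in URL_PATTERN.findall(answer):
        url = match.rstrip(".,;:")  # drop trailing sentence punctuation
        if url not in seen:
            seen.append(url)
    return seen

sample = "See https://example.com/a. Also (https://example.org/b)."
for url in list_cited_urls(sample):
    print("Check manually:", url)
```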


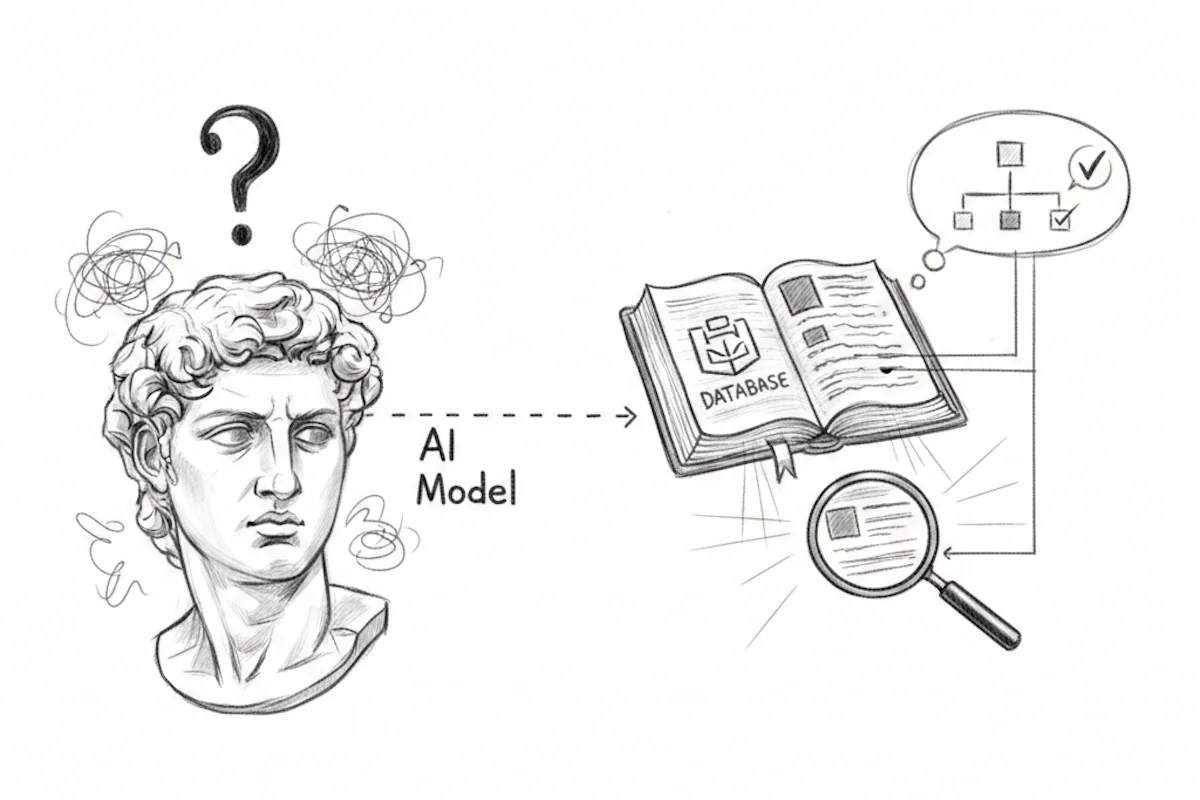

4. How to mitigate hallucination risks

While we cannot yet eliminate hallucinations entirely, we can “tame” them through rigorous Prompt Engineering:

- The exit instruction: Always add a safety clause such as: “If you are not sure of the answer or do not have the information, admit it explicitly instead of making it up.”

- Context framing: Provide the source documents yourself. By telling the AI “Use only this text to answer,” you significantly limit its tendency to pull incorrect information from elsewhere.

- Chain of thought: Ask the AI to detail its reasoning. Often, by writing out the intermediate steps, the AI will realise its own conclusion is flawed.
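The three techniques above can be combined into a single prompt template. The sketch below is one possible way to do it, not an official recipe: the function name `build_guarded_prompt`, its parameters, and the exact wording of the chain-of-thought line are assumptions. It only builds a string, so it works with any chat interface where you can paste text.

```python
# The exit instruction, quoted from the first technique above.
EXIT_INSTRUCTION = (
    "If you are not sure of the answer or do not have the information, "
    "admit it explicitly instead of making it up."
)

def build_guarded_prompt(question, source_text=None, show_reasoning=False):
    """Assemble a prompt that applies the hallucination safeguards."""
    parts = []
    if source_text is not None:
        # Context framing: restrict the model to the supplied document.
        parts.append("Use only this text to answer:\n" + source_text)
    if show_reasoning:
        # Chain of thought: ask for the intermediate steps.
        parts.append(
            "Detail your reasoning step by step before giving the final answer."
        )
    # Exit instruction: always included.
    parts.append(EXIT_INSTRUCTION)
    parts.append("Question: " + question)
    return "\n\n".join(parts)

print(build_guarded_prompt(
    "What was the company's revenue last year?",
    source_text="(paste the annual report excerpt here)",
    show_reasoning=True,
))
```

Keeping the exit instruction unconditional reflects the advice to always add it; the other two safeguards are optional because not every question comes with a source document or needs step-by-step reasoning.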

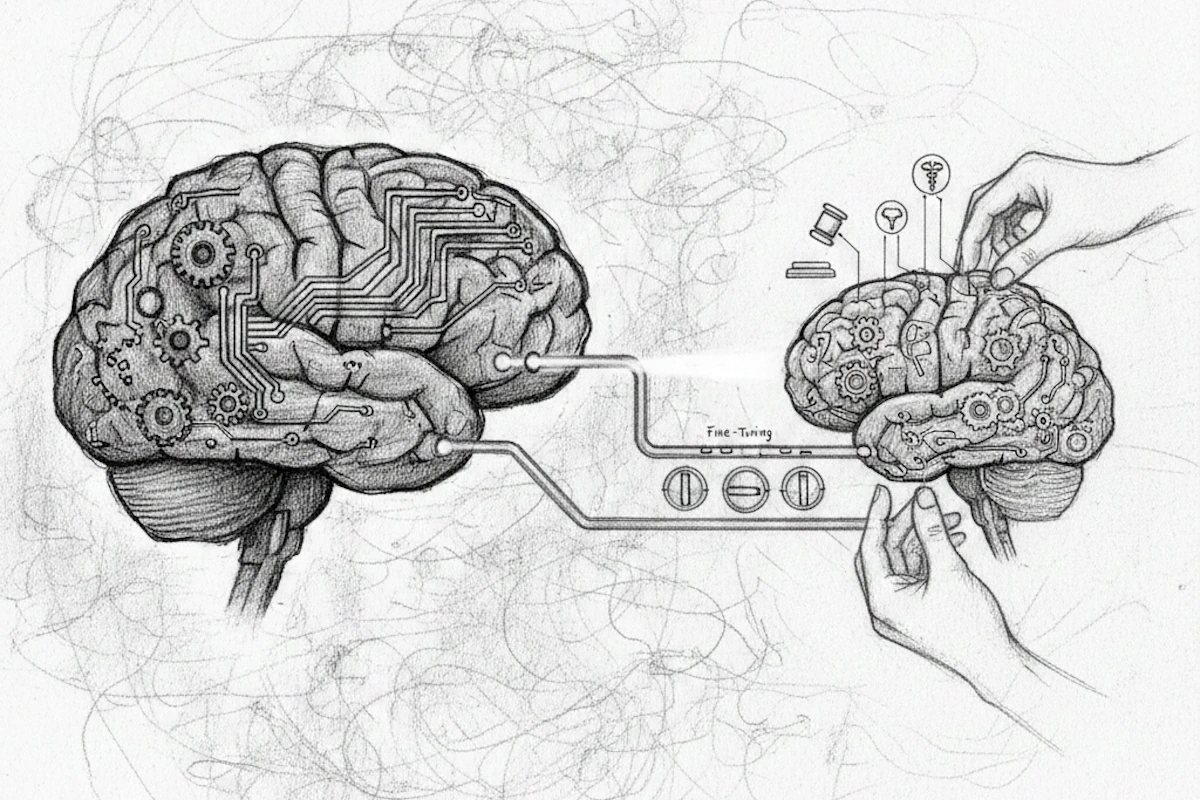

5. The golden rule: AI is an assistant, not an oracle

At Free AI Online, we provide you with the most powerful tools in the world, but they do not replace your judgement. AI excels at structuring, writing, translating, and imagining.

However, for anything related to health, law, or finance, human verification through official sources remains the only reliable safeguard.
