In the rapidly evolving landscape of artificial intelligence, the term “Token” is perhaps the most critical concept for any user to master. Whether you are measuring the power of a model like GPT-5 or understanding why a conversation suddenly loses its thread, tokens are the key.

At Free AI Online, we believe that transparency is essential. To get the most out of our tools, you must understand how they “digest” the information you provide.

Here is a deep dive into the world of tokens.

1. What is a Token? Beyond the word

To a human, a sentence is a string of words. To an AI, a sentence is a sequence of tokens. A language model does not “read” letters; it converts text into numerical IDs, one per token, and performs its calculations on those numbers.

A token is a unit of text that the model handles in a single step. It is rarely as simple as “one word equals one token.”

- Common Words: Frequently used words like “apple” or “house” are typically treated as a single token.

- Complex Words: Longer or less common words are broken down into sub-units. For example, “unbelievable” might be split into three tokens: “un”, “believ”, and “able”.

- Characters & Punctuation: Spaces, commas, and exclamation marks are often counted as individual tokens or merged with adjacent characters.

- Code & Emojis: Programming syntax and emojis are highly “token-heavy,” often requiring several tokens to be represented correctly.
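
To make the splitting idea concrete, here is a minimal sketch of a toy tokenizer that always takes the longest fragment it knows. The vocabulary and the function name `toy_tokenize` are invented for illustration; real tokenizers learn their vocabulary from large amounts of text and may split the same words differently.

```python
# Toy vocabulary: hypothetical fragments chosen for illustration only.
TOY_VOCAB = {"apple", "house", "un", "believ", "able", " ", ",", "!"}


def toy_tokenize(text: str, vocab: set[str] = TOY_VOCAB) -> list[str]:
    """Split text by greedily taking the longest vocabulary entry at each position."""
    tokens = []
    i = 0
    while i < len(text):
        # Try the longest possible fragment first, then shrink it.
        for j in range(len(text), i, -1):
            if text[i:j] in vocab:
                tokens.append(text[i:j])
                i = j
                break
        else:
            # Nothing in the vocabulary matched: fall back to a single character.
            tokens.append(text[i])
            i += 1
    return tokens


print(toy_tokenize("apple"))          # ['apple']
print(toy_tokenize("unbelievable"))   # ['un', 'believ', 'able']
print(toy_tokenize("house, apple!"))  # ['house', ',', ' ', 'apple', '!']
```

The common word stays whole, the longer word is assembled from known fragments, and the punctuation and the space each become their own token.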

Rule of Thumb: In English, 1,000 tokens correspond to roughly 750 words, or about 1.33 tokens per word. The exact ratio depends on the model’s tokenizer and on the text itself.
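
The rule of thumb above can be turned into a quick estimate. This is only a rough sketch: the helper names are made up for this example, and counting whitespace-separated words is an approximation that works for ordinary English prose, not for code or other languages.

```python
WORDS_PER_1000_TOKENS = 750  # taken from the rule of thumb for English text


def estimate_tokens(text: str) -> int:
    """Rough token estimate based on the number of whitespace-separated words."""
    word_count = len(text.split())
    return round(word_count * 1000 / WORDS_PER_1000_TOKENS)


def estimate_words(token_count: int) -> int:
    """Rough number of English words that fit in a given number of tokens."""
    return round(token_count * WORDS_PER_1000_TOKENS / 1000)


print(estimate_tokens("The quick brown fox jumps over the lazy dog"))  # 9 words -> 12
print(estimate_words(128_000))  # 96000
```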

2. Why use Tokens instead of words?

You might wonder why AI developers don’t just use standard word counts. The use of tokens offers several technical advantages:

- Mathematical Efficiency: A fixed vocabulary of tokens lets the model represent a practically unlimited range of words with a limited set of numerical IDs, which keeps computation manageable.

- Linguistic Intelligence: By breaking words into fragments, the AI can often infer the meaning of a word it has rarely or never seen by recognising familiar roots, prefixes, and suffixes.

- Language Universality: Tokenization allows a single model to process multiple languages simultaneously, even those that do not use spaces between words, such as Japanese or Chinese.
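
One way a tokenizer can cover every language is to fall back on the raw UTF-8 bytes of the text, as byte-level tokenizers do. The sketch below shows only that fallback step, not a full tokenizer, and the function name `to_byte_ids` is made up for this example.

```python
def to_byte_ids(text: str) -> list[int]:
    """Encode text as UTF-8 and return each byte as an integer ID between 0 and 255."""
    return list(text.encode("utf-8"))


english = "house"
japanese = "こんにちは"  # "hello", written without any spaces

# Number of characters, then number of byte IDs.
print(len(english), len(to_byte_ids(english)))    # 5 5
print(len(japanese), len(to_byte_ids(japanese)))  # 5 15

# The IDs can always be turned back into the original text.
print(bytes(to_byte_ids(japanese)).decode("utf-8") == japanese)  # True
```

A real tokenizer then merges frequent byte sequences into larger tokens, so actual token counts are lower than these byte counts. Even so, text in non-Latin scripts often needs more tokens per character than English.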

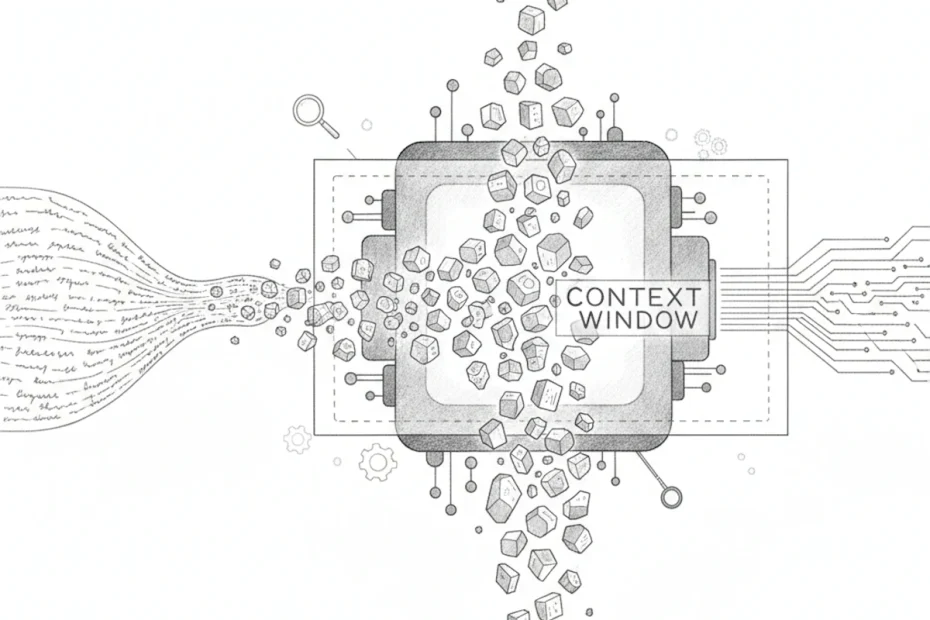

3. The “Context Window”: The limits of memory

The most practical reason to understand tokens is the Context Window. This is the AI’s “short-term memory.” Every model has a fixed capacity for how many tokens it can process at any given moment.

- The limit: If a model has a context window of 128,000 tokens, that includes your current prompt, all previous messages in the chat, and the response the AI is currently writing.

- The “Conveyor Belt” effect: Once this limit is reached, the oldest tokens at the beginning of the conversation are typically dropped to make room for new ones, so the AI effectively “forgets” them.

- The result: This is why, in very long discussions, the AI might eventually lose track of instructions you gave at the very start.
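
The “conveyor belt” can be sketched in a few lines. This is an illustration under stated assumptions, not how any particular chat tool works: the function names are invented, token counts are estimated from word counts, and the tiny window of 32 tokens is chosen only so the effect is visible. Some applications summarise old messages instead of dropping them.

```python
def estimate_tokens(text: str) -> int:
    """Very rough token estimate: about 1,000 tokens per 750 English words."""
    return max(1, round(len(text.split()) * 1000 / 750))


def trim_history(messages: list[str], context_window: int, reserved_for_reply: int) -> list[str]:
    """Keep the newest messages that fit in the window, dropping the oldest first."""
    # The window must also hold the reply, so that space is set aside up front.
    budget = context_window - reserved_for_reply
    kept = []
    used = 0
    # Walk from newest to oldest so that recent messages are kept first.
    for message in reversed(messages):
        cost = estimate_tokens(message)
        if used + cost > budget:
            break
        kept.append(message)
        used += cost
    kept.reverse()  # restore chronological order
    return kept


history = [
    "Always answer in French.",          # oldest message: an instruction
    "What is a token?",
    "A token is a unit of text.",
    "How large is the context window?",  # newest message
]

print(trim_history(history, context_window=32, reserved_for_reply=10))
# ['What is a token?', 'A token is a unit of text.', 'How large is the context window?']
```

The instruction given at the very start is the first thing to fall off the belt, which matches the behaviour described above.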

4. Impact on performance and reasoning

The way an AI uses tokens directly affects the quality of its output:

- Speed: A model generates its response one token at a time, so longer outputs take longer. This is why asking for a “concise” answer results in a faster response.

- Chain of thought: When you ask an AI to “think step-by-step,” it uses extra tokens to map out its logic. While this consumes more of the context window and takes longer, it often improves accuracy on complex tasks.
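
The trade-off between speed and step-by-step reasoning comes down to simple arithmetic. The sketch below assumes a generation speed of 50 tokens per second, a made-up figure used only for illustration; real speeds vary with the model and the service.

```python
def estimated_seconds(output_tokens: int, tokens_per_second: float) -> float:
    """Time to generate a response, assuming a constant generation speed."""
    return output_tokens / tokens_per_second


SPEED = 50.0  # hypothetical speed in tokens per second

concise_answer = 150   # tokens in a short, direct answer
reasoning_steps = 600  # extra tokens spent "thinking step-by-step"

print(estimated_seconds(concise_answer, SPEED))                    # 3.0
print(estimated_seconds(concise_answer + reasoning_steps, SPEED))  # 15.0
```

Under these assumptions, the step-by-step answer takes five times as long and uses five times as much of the context window, which is worth paying for on a hard problem and wasteful on a simple one.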

5. Pro-Tips for optimising your token usage

Even though Free AI Online offers unrestricted access, being “token-efficient” will lead to better, sharper results:

- Be direct: Avoid unnecessary “fluff.” A clear, well-structured prompt provides the AI with more “memory room” to focus on the actual task.

- Summarise long inputs: If you are working with a massive document, ask the AI to summarise the key points first, then continue working from that summary. This keeps the essential information within the active context window.

- Start fresh for new topics: If you are switching from a coding task to a creative writing task, start a new chat. This clears out the old tokens and gives the AI 100% of its memory for the new goal.

–

To learn more about the world of AI, visit our AI Glossary.
