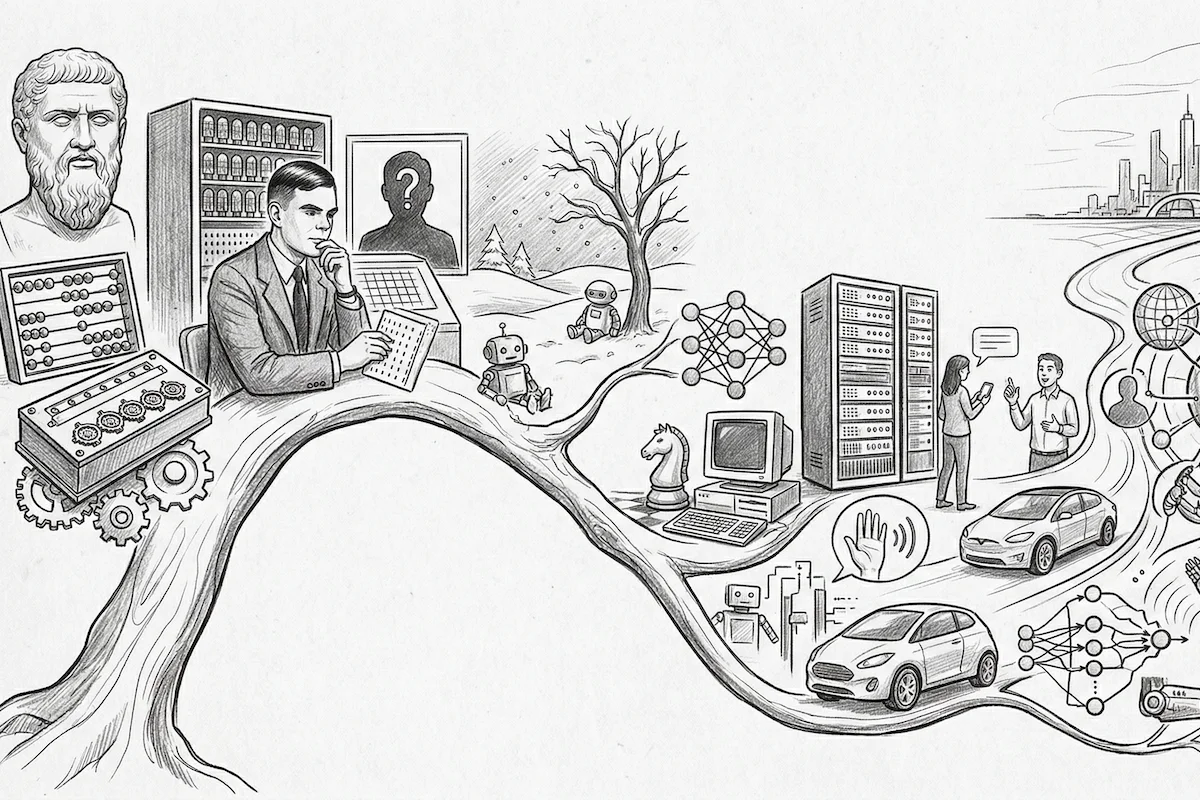

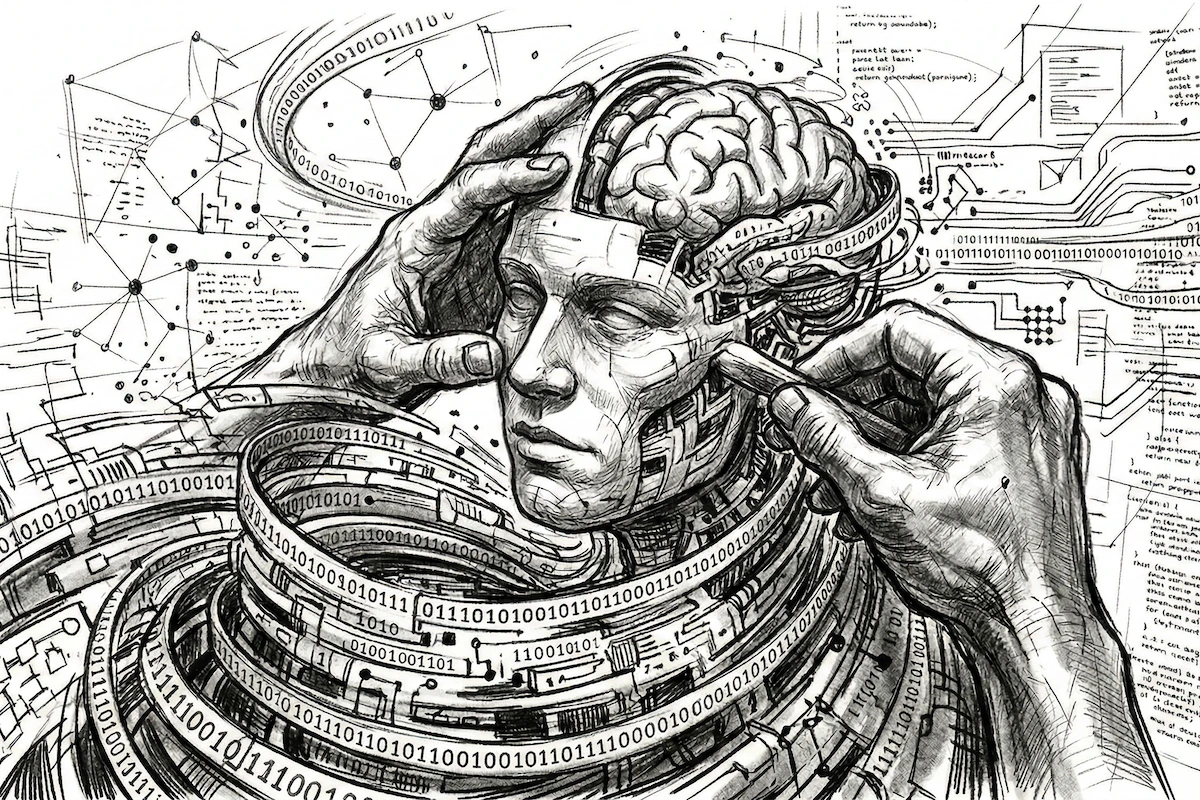

Artificial Intelligence is not a sudden discovery. It is the result of a profound intellectual convergence between ancient philosophy, 19th-century mathematical logic, and 20th-century computer engineering. While platforms like Free AI Online now allow anyone to access this power in a single click, this reality was built upon decades of genius from visionaries who dared to ask: “Can a machine think?”

This is the definitive history of the invention of Artificial Intelligence.

1. Ancient roots: From mythology to logic (Pre-1900)

Long before electricity, the idea of an artificial being endowed with reason existed in the human imagination.

- Antiquity: The Ancient Greeks dreamt of automata, such as Talos, the bronze giant tasked with protecting Crete.

- The 17th century: Philosopher and mathematician Blaise Pascal invented the “Pascaline,” one of the first mechanical calculators. Later in the century, Gottfried Wilhelm Leibniz envisioned a universal language of thought in which all reasoning could be reduced to calculation.

- The 19th century: Ada Lovelace, often regarded as the world’s first computer programmer, wrote an algorithm for Charles Babbage’s “Analytical Engine.” She was among the first to foresee that machines could do more than mere calculation and might process symbols, music, and text.


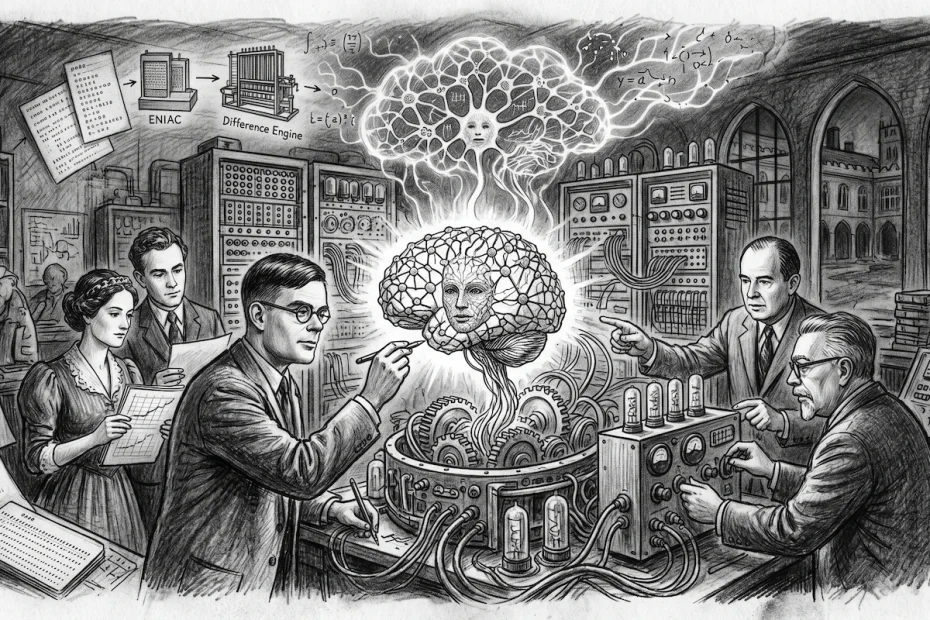

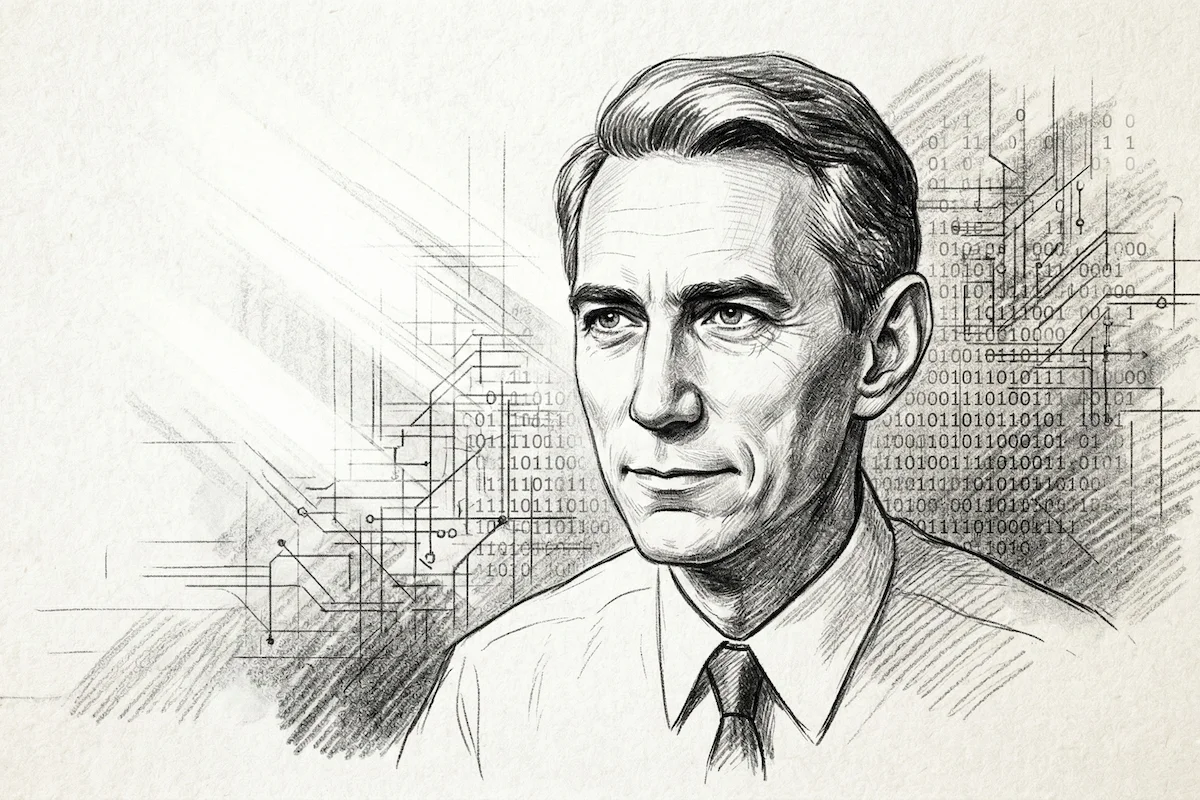

2. Alan Turing and the fundamental question (1936 – 1950)

The true technological leap occurred with Alan Turing. In 1936, he conceived the “Turing Machine,” a theoretical model of computation that formalised what a machine can compute and laid the foundation for the modern computer.

However, it was in 1950 that Turing changed history with his seminal paper, “Computing Machinery and Intelligence.” In it, he presented the Imitation Game, known today as the Turing Test.

- The concept: If, during a text-based conversation, a human judge cannot reliably tell the machine apart from a person, then the machine can be said to demonstrate “intelligence.”

- The legacy: Turing argued that intelligence does not depend on biology, but on the ability to process information effectively.

3. Summer 1956: The baptism of AI at Dartmouth

While Turing is the conceptual father, the term “Artificial Intelligence” was coined in the 1955 proposal for a summer workshop held at Dartmouth College in 1956. That workshop, planned to run for two months, was the moment AI became a formal field of science.

- John McCarthy: Frequently cited as the primary inventor of AI, McCarthy chose the name “Artificial Intelligence” for the Dartmouth project. He wanted a strong term to distinguish the field from “cybernetics.” He later created LISP, the programming language that dominated AI research for 30 years.

- Marvin Minsky: A cognitive science expert, Marvin Minsky was convinced that human intelligence could be broken down into small, simple processes that a computer could replicate. He co-founded the MIT AI Lab.

- Allen Newell and Herbert Simon: At this conference, they presented the Logic Theorist, often considered the first AI program. It successfully proved 38 of the first 52 theorems in chapter 2 of Russell and Whitehead’s Principia Mathematica.

The accompanying photograph features legendary figures who shaped modern computing. The people in this shot are, from left to right:

Trenchard More / John McCarthy (the conference organiser, who famously coined the term “Artificial Intelligence”) / Marvin Minsky (a pioneer of neural networks and robotics) / Oliver Selfridge / Ray Solomonoff / Claude Shannon (the father of information theory) / Nathaniel Rochester (designer of the IBM 701).

4. The era of “Expert Systems” and the first chatbot (1960 – 1980)

Following Dartmouth, optimism was at an all-time high. Several leading researchers predicted that machines would match human intelligence within a decade or two.

- ELIZA (1966): Joseph Weizenbaum at MIT created one of the first conversational agents. ELIZA simulated a psychotherapist by matching patterns in the user’s text and turning them back into questions. Though rudimentary, it showed how readily humans bond emotionally with a program, a phenomenon we still observe today with the tools on Free AI Online.

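- Expert systems: In the 1970s, researchers created programs such as MYCIN, which helped diagnose bacterial infections, and PROSPECTOR, which assisted in mineral prospecting. These systems encoded the knowledge of human specialists as hundreds or even thousands of “If-Then” rules.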
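
To give a feel for how “If-Then” rules can drive a diagnosis, here is a minimal sketch of forward chaining, one common way such rules are applied. It is an illustration only: the function name `forward_chain`, the rule format, and the toy rules are all hypothetical and are not taken from any historical expert system.

```python
def forward_chain(facts, rules):
    """Apply If-Then rules repeatedly until no new conclusion can be derived."""
    known = set(facts)
    changed = True
    while changed:
        changed = False
        for conditions, conclusion in rules:
            # A rule "fires" when all of its conditions are already known.
            if conclusion not in known and conditions <= known:
                known.add(conclusion)
                changed = True
    return known


# Hypothetical toy rules, invented for illustration (not medical advice).
RULES = [
    (frozenset({"fever", "cough"}), "possible_respiratory_infection"),
    (frozenset({"possible_respiratory_infection", "chest_pain"}), "recommend_chest_xray"),
]

derived = forward_chain({"fever", "cough", "chest_pain"}, RULES)
print(sorted(derived))
```

Note that the second rule can only fire after the first one has added its conclusion. This chaining of simple rules is what let such systems reach non-obvious conclusions, and it also shows their limit: every rule had to be written by hand.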

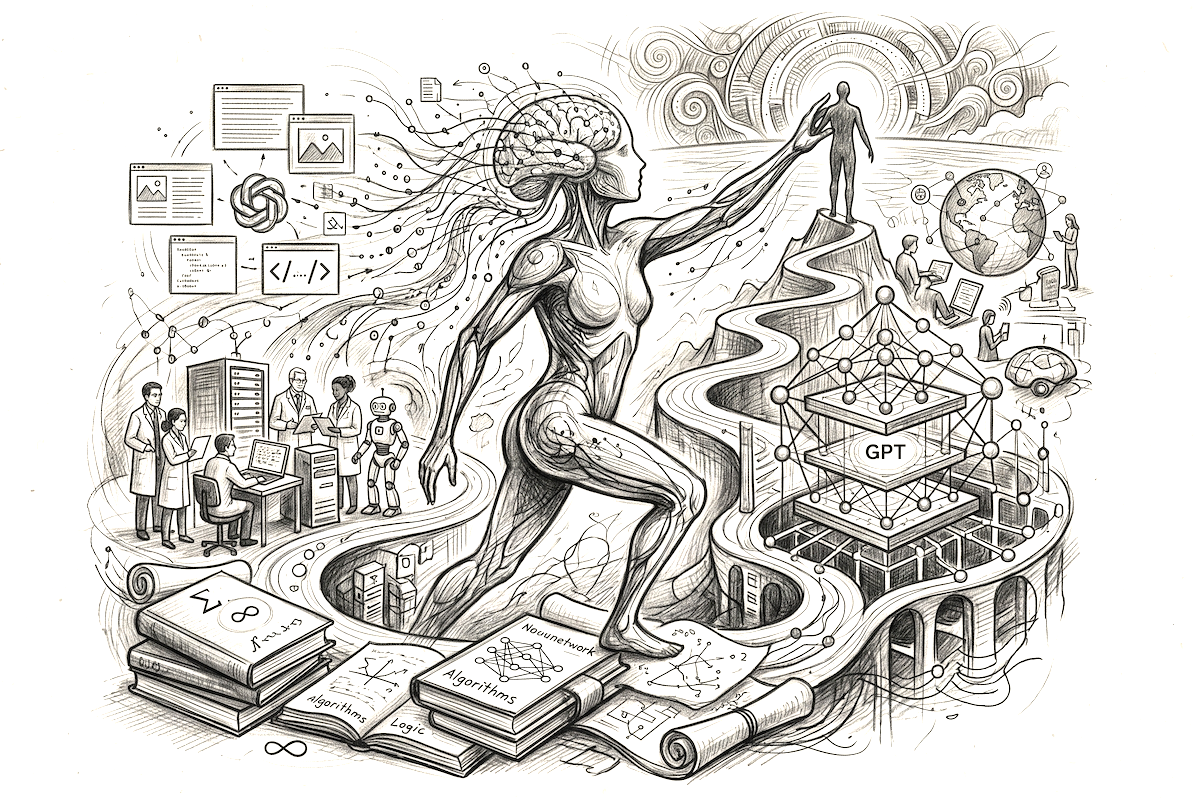

5. The silent revolution: Machine Learning and Neural Networks

For a long time, two visions clashed: AI based on logic (rules written by humans) and AI based on learning (the machine learning by itself).

- Arthur Samuel (1959): He coined the term Machine Learning and created a checkers program that improved through experience, learning from the games it played.

- Frank Rosenblatt (1958): He invented the Perceptron, an early trainable artificial neural network and an ancestor of today’s models, inspired by the functioning of biological neurons in the brain.

- The Revival (2000s): After several “AI Winters” (periods of doubt and lack of funding), three researchers breathed new life into the field: Geoffrey Hinton, Yann LeCun, and Yoshua Bengio. They pioneered Deep Learning, which trains neural networks with many layers. Armed with modern GPU processing power and massive datasets from the internet, their algorithms became capable of recognising images and processing language far more accurately than earlier approaches.
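
To make the learning-based vision concrete, here is a minimal sketch of a Perceptron-style learning rule: a single artificial neuron that adjusts its weights whenever it makes a mistake. The function names, the learning rate, and the choice of the logical AND as training data are assumptions made for this illustration, not a reconstruction of Rosenblatt’s original machine.

```python
def predict(weights, bias, inputs):
    """Fire (1) if the weighted sum of the inputs exceeds zero, else stay silent (0)."""
    activation = sum(w * x for w, x in zip(weights, inputs)) + bias
    return 1 if activation > 0 else 0


def train_perceptron(samples, epochs=20, learning_rate=1.0):
    """Nudge weights and bias towards the target after every wrong prediction."""
    weights = [0.0] * len(samples[0][0])
    bias = 0.0
    for _ in range(epochs):
        for inputs, target in samples:
            error = target - predict(weights, bias, inputs)
            weights = [w + learning_rate * error * x for w, x in zip(weights, inputs)]
            bias += learning_rate * error
    return weights, bias


# Training data for the logical AND: output 1 only when both inputs are 1.
AND_GATE = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]

weights, bias = train_perceptron(AND_GATE)
print([predict(weights, bias, inputs) for inputs, _ in AND_GATE])
```

Nobody writes a rule for AND here; the program finds suitable weights from examples alone. Deep Learning rests on the same idea, with many such units stacked in layers and a more sophisticated training procedure.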

6. The GPT revolution and 2026: The age of total democratisation

The most dramatic shift in the history of AI occurred not in a lab, but in the hands of the public. While research had been progressing for decades, the world changed forever on 30 November 2022, with the release of ChatGPT by OpenAI.

The “Big Bang” of Generative AI (2022)

Before 2022, AI was often invisible, working behind the scenes in Netflix recommendations or Google searches. ChatGPT changed the paradigm by giving AI a voice. Built on the GPT (Generative Pre-trained Transformer) architecture, it showed a mass audience that an AI could not only analyse data but also create.

This era was powered by the Transformer architecture, originally invented by Google researchers in 2017 (in the famous paper “Attention Is All You Need”). Its attention mechanism lets a model weigh every word in a sentence against every other word at once, so it can capture the “context” and “intent” of the whole sentence rather than just reading words in a sequence. It was the missing piece of the puzzle that Turing and McCarthy had dreamt of.
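
The idea of attention can be sketched in a few lines. The example below is a deliberately simplified version of scaled dot-product self-attention written in plain Python: each word vector is used directly as its own query, key, and value, whereas real Transformers apply learned projections, several attention heads, and many stacked layers. The function names and the toy vectors are assumptions made for this illustration.

```python
import math


def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    highest = max(scores)
    exps = [math.exp(s - highest) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(vectors):
    """Simplified scaled dot-product self-attention without learned projections."""
    dim = len(vectors[0])
    outputs = []
    for query in vectors:
        # Compare this word with every word in the sentence, itself included.
        scores = [
            sum(q * k for q, k in zip(query, key)) / math.sqrt(dim)
            for key in vectors
        ]
        weights = softmax(scores)
        # The new representation is a weighted blend of all word vectors.
        blended = [sum(w * v[i] for w, v in zip(weights, vectors)) for i in range(dim)]
        outputs.append(blended)
    return outputs


# Three hypothetical two-dimensional "word" vectors.
sentence = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
for row in self_attention(sentence):
    print([round(value, 3) for value in row])
```

Every output vector mixes information from the whole sentence in a single step, which is what lets the model take global context into account instead of moving through the words one at a time.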

From laboratory to everyday utility (2023 – 2026)

Following the success of ChatGPT, a global arms race began. Tech giants and open-source communities alike released increasingly powerful models: GPT-5, Claude, Gemini, and Llama.

By 2026, AI has moved beyond simple chat. We have entered the era of Multimodality, where a single AI can see images, hear voices, and write code. The “AI Divide” has begun to close as these technologies become integrated into every aspect of our digital lives, from professional drafting to real-time language translation.

The mission of Free AI Online

It is this long lineage of researchers, from the mathematical genius of Alan Turing to the engineering breakthroughs of the 2020s, that allows Free AI Online to function today.

We are currently living in the democratisation phase. What was once a luxury reserved for NASA supercomputers or elite MIT laboratories is now a utility, as essential as electricity. Our platform’s mission is to ensure that this heritage remains accessible to all. By removing the barriers of “paywalls” and “mandatory registrations,” we allow every student, freelancer, and curious mind to leverage the pinnacle of 70 years of human genius, completely for free.

Who really invented AI?

If we must remember specific names, they are John McCarthy for the name, Alan Turing for the concept, and Geoffrey Hinton for the modern technology. However, AI is ultimately a collective human achievement.

At Free AI Online, we honour this heritage by making these complex tools simple and free for all. Whether you want to draft a professional email, translate a complex document, or simply explore the future of technology, you are using the result of over 70 years of human genius.