In the United States, the race for dominance in artificial intelligence is taking a decisive turn, marked by a clash between opposing political visions. On one side stands Donald Trump, a staunch advocate of a hands-off approach meant to preserve America’s global leadership in AI. On the other stands California’s Democratic Governor Gavin Newsom, who on October 13, 2025, signed a series of laws regulating AI, with a particular focus on protecting children.

A Pioneering Regulatory Framework for AI

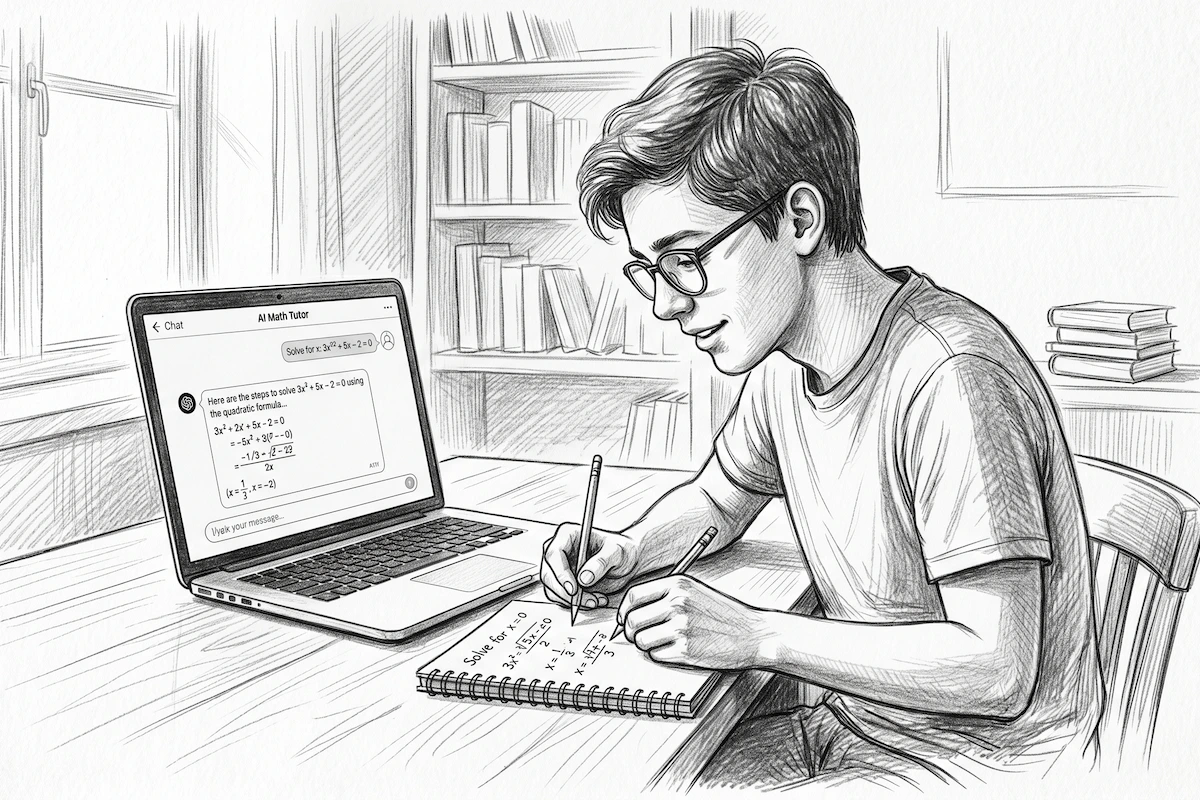

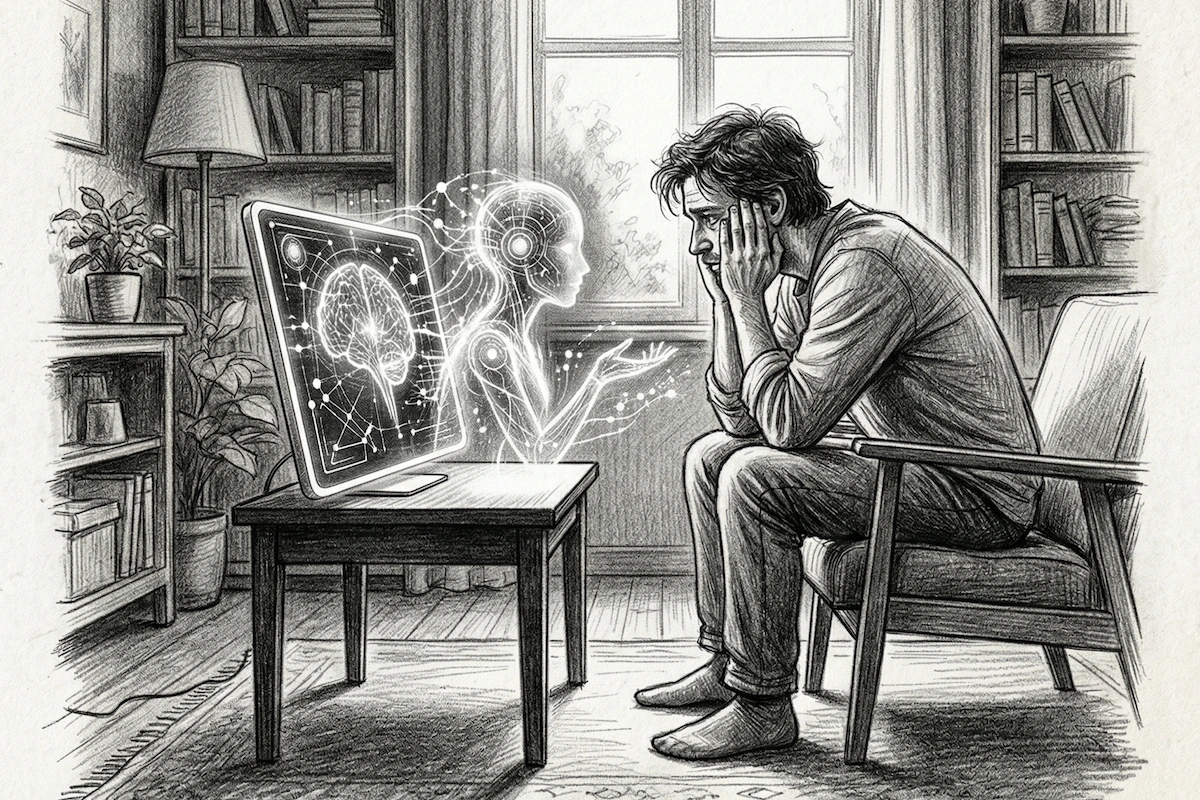

California, home to 32 of the world’s top 50 AI companies, is positioning itself as a key player in AI regulation. These laws, among the first of their kind in the U.S., impose strict requirements on operators of so-called “companion” chatbots, programs designed to engage in conversations with users. Operators must now build in safeguards that detect and block content promoting self-harm or suicide.

Operators are also required to direct users to mental health support services when necessary.

Another significant measure targets minors: platforms must send reminders every three hours advising young users to take a break and clarifying that they are interacting with an AI, not a human. These steps aim to shield vulnerable youth from the psychological risks tied to prolonged or poorly supervised use of such technologies.

Addressing the Potential Dangers of Chatbots

In signing these laws, Gavin Newsom emphasized the urgent need for “guardrails” to manage emerging technologies like chatbots and social media. Without oversight, he warned, these tools could “exploit, deceive, or endanger” children. His stance is bolstered by real-world cases, such as that of Adam Raine, a 16-year-old Californian whose parents have sued OpenAI, alleging that ChatGPT contributed to their son’s suicide.

This tragedy has heightened concerns among lawmakers about the impact of conversational AI on the mental health of young and vulnerable users.

Tech Industry Pushback

The new regulations have sparked significant backlash from the tech industry. TechNet, an association representing major players like Meta, Google, and OpenAI, has sharply criticized the legislative framework. The group argues that the definition of “companion chatbot” is overly vague and has expressed concern over the threat of legal action for non-compliance.

In their view, these measures could stifle innovation and undermine the competitiveness of California’s tech sector—an argument that aligns with Donald Trump’s deregulatory stance.

The U.S. president, backed financially by figures like Mark Zuckerberg of Meta and Sam Altman of OpenAI, advocates for a laissez-faire approach to keep American companies ahead in the global AI race. For Trump, excessive regulation risks hampering U.S. firms’ ability to compete with international rivals, particularly in China.

Concessions Spark Controversy

Despite their intent to protect children, California’s new laws have not fully satisfied child advocacy groups. Some criticize concessions made under pressure from the tech industry. For instance, chatbots used in video games or voice assistants were exempted from the regulations, a move seen by critics as a capitulation to corporate lobbying.

Balancing Innovation and Responsibility

This tug-of-war between regulation and innovation highlights the challenges of governing a rapidly evolving industry. Through these measures, California seeks to reconcile its role as a global AI leader with a commitment to protecting its citizens, especially children. However, with a powerful tech industry and a federal administration favoring deregulation, the fight to shape AI’s future in the U.S. is just beginning. With Gavin Newsom’s bold vision on one side and Donald Trump’s pro-business approach on the other, the path forward for AI in America hinges on a delicate balance between technological progress and user safety.

Source: Los Angeles Times