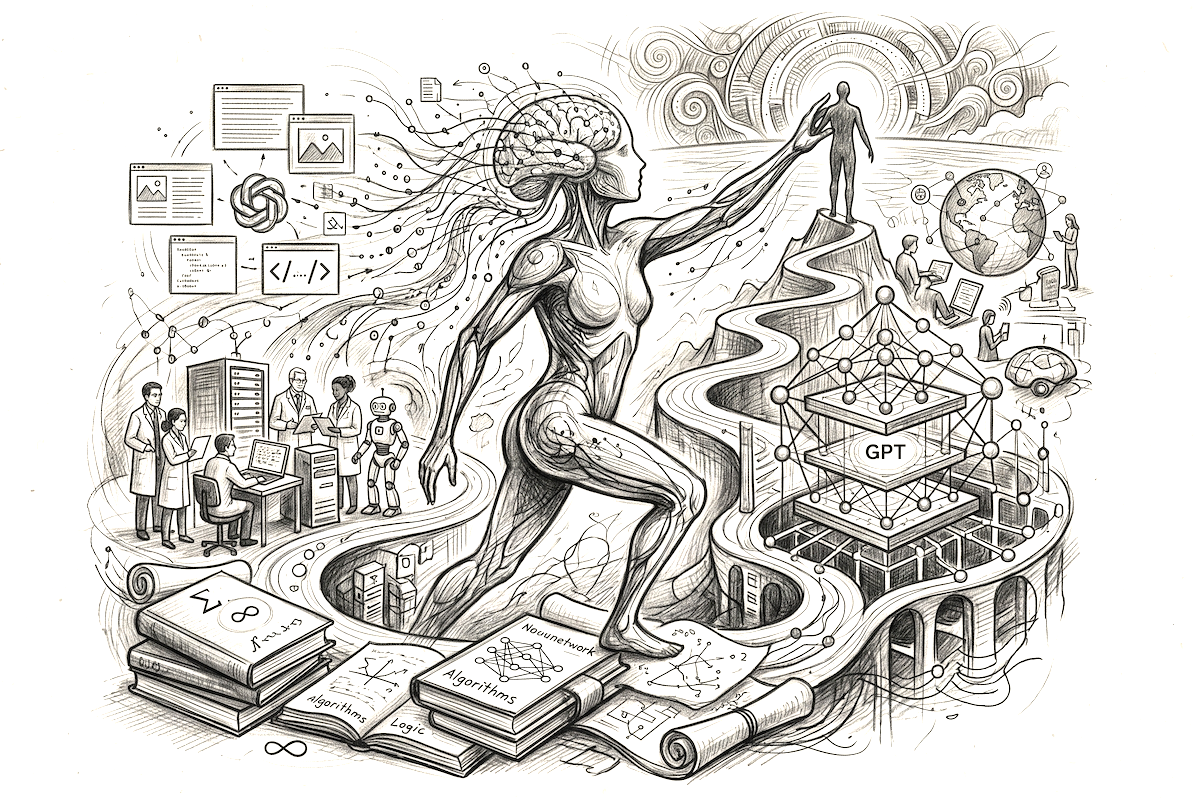

In just a few years, the acronym GPT has become the symbol of a major technological disruption. Behind these three letters lies a paradigm shift: we have moved from computing tools operated through commands to digital collaborators with whom we converse. To grasp the magnitude of this shift, one must look under the hood and examine how these models actually work.

I. Technical Definition: The Three Pillars of the Acronym

GPT stands for Generative Pre-trained Transformer. Each term describes a crucial stage in the model’s creation.

1. Generative

In the world of artificial intelligence, a distinction is traditionally made between two types of models: discriminative and generative.

- A discriminative model observes data and assigns a label to it (e.g., “This is a photo of a cat”).

- A generative model, like GPT, goes further. Based on its knowledge, it is capable of producing entirely new data that did not exist before. If it has analyzed millions of poems, it will not recite one by heart; it will compose a new one, respecting the rules of rhyme and meter that it has “understood.”

2. Pre-trained

This is where the raw power lies. Before reaching your screen, the model underwent a massive learning phase. Imagine a library containing a vast portion of the global internet: the entirety of Wikipedia, digital libraries, discussion forums (like Reddit), computer code repositories (GitHub), and news archives.

For months, on thousands of high-powered processors, the model “read” this text to learn not just specific facts, but the deep structure of language itself. This “pre-training” allows the model to arrive with a universal cultural and linguistic background before it even receives its first question.

3. Transformer

This was the scientific breakthrough of 2017, introduced in a Google research paper titled “Attention Is All You Need.” Before the Transformer, the dominant language models (recurrent models) read sentences word by word, in order. The Transformer instead processes all the words of a sentence at once.

Thanks to a mechanism called Attention, it can weigh the importance of each word in relation to others. In the sentence “The animal didn’t cross the street because it was too tired,” the Transformer understands that “it” refers to the animal. If the sentence ends with “because it was too wide,” it instantly understands that “it” refers to the street. This ability to grasp complex context is what makes GPT’s responses feel so human.
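To make the idea of "weighing the importance of each word" concrete, here is a minimal sketch of scaled dot-product attention for a single word. The two-dimensional vectors are hypothetical values chosen by hand for illustration; in a real Transformer they are learned during training and have far more dimensions.

```python
import math

def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    m = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def attention(query, keys, values):
    """Scaled dot-product attention for one query vector."""
    d = len(query)
    # Similarity between the query and every key, scaled by sqrt(d)
    scores = [sum(q * k for q, k in zip(query, key)) / math.sqrt(d)
              for key in keys]
    weights = softmax(scores)
    # The output is the weighted average of the value vectors
    dim = len(values[0])
    output = [sum(w * v[i] for w, v in zip(weights, values))
              for i in range(dim)]
    return weights, output

# Hypothetical toy vectors (assumption: hand-picked, not learned)
words = ["animal", "street", "tired"]
keys = [[1.0, 0.0], [0.0, 1.0], [0.9, 0.1]]
values = keys
query_it = [2.0, 0.0]  # hypothetical query vector for the word "it"

weights, output = attention(query_it, keys, values)
most_attended = words[weights.index(max(weights))]
```

With these made-up numbers, the query for "it" points in the same direction as the key for "animal", so "animal" receives the largest weight. A trained model arrives at such alignments on its own, from data.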


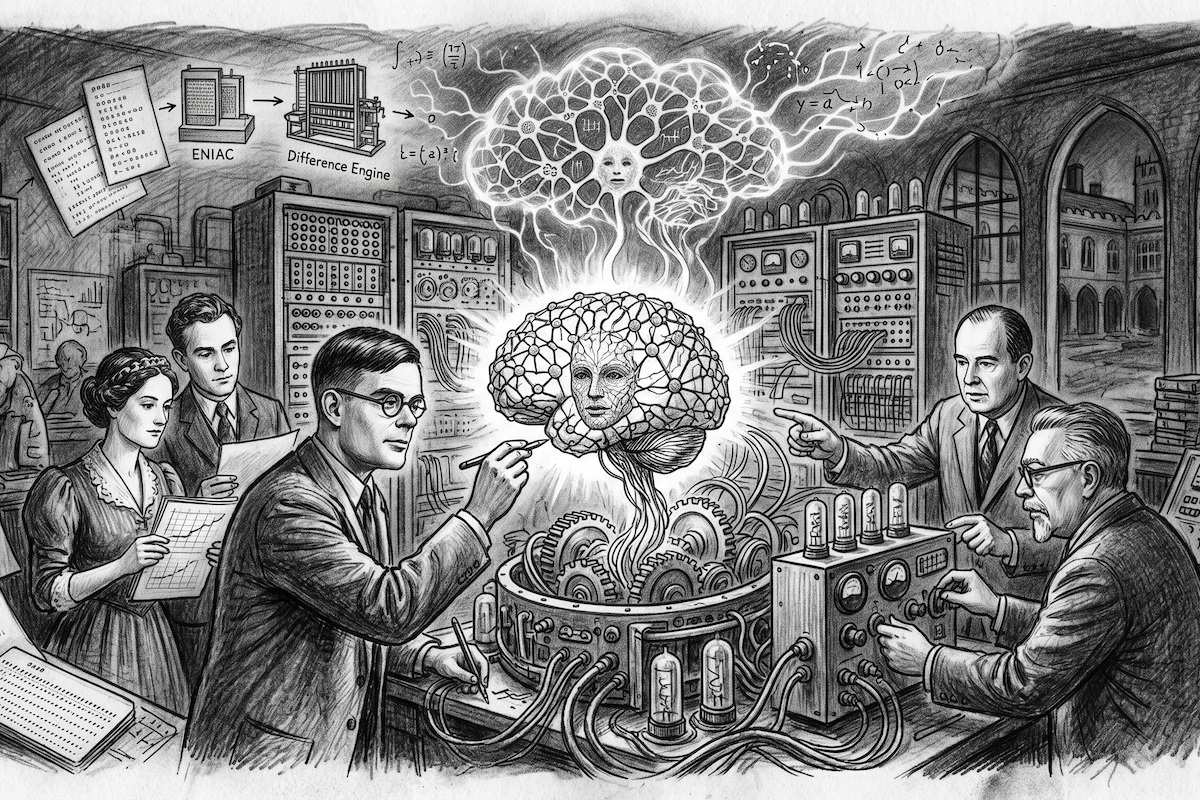

II. A brief history of evolution: From GPT-1 to GPT-5

The story of GPT is one of exponential growth, driven by the company OpenAI.

The Awakening (GPT-1 and GPT-2)

In 2018, GPT-1 laid the foundation. It could perform simple tasks, but it could only keep a short span of text in context. In 2019, GPT-2 scaled up significantly with 1.5 billion parameters (the numerical weights the model learns during training). It became so proficient at generating coherent text that OpenAI initially withheld the full model and released it in stages, fearing it would be used to create industrial-scale disinformation.

The Explosion (GPT-3 and 3.5)

In 2020, GPT-3 crossed a psychological barrier with 175 billion parameters. It was a computational beast. It was no longer just a translator or a writer; it became capable of logical reasoning and coding in dozens of programming languages. The GPT-3.5 version served as the engine for the public launch of ChatGPT in November 2022, causing a global sensation.

Maturity (GPT-4 and Beyond)

Released in 2023, GPT-4 is multimodal. It can analyze images, solve complex mathematical problems, and display much higher levels of creativity. Its accuracy was refined to reduce “hallucinations” (moments where the AI confidently invents facts).


III. Internal Workings: A Probability Machine

Contrary to popular belief, GPT does not have “thoughts” or opinions. Its functioning is purely statistical, but on such a vast scale that it simulates intelligence.

When a user types a query, the model first splits the text into tokens (words or word fragments) and maps each token to a list of numbers called an embedding, a step sometimes described as vectorization. These numbers place each token in a mathematical space with thousands of dimensions. In this space, the word “King” is mathematically close to “Queen” or “Crown.”
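As a rough illustration of what "mathematically close" means, the sketch below compares toy embeddings with cosine similarity, a common closeness measure for vectors. The three-dimensional vectors are invented for this example; real embeddings are learned and much larger.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two vectors (1.0 = same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

# Hypothetical embeddings (assumption: values made up for illustration)
embeddings = {
    "king": [0.9, 0.8, 0.1],
    "queen": [0.85, 0.9, 0.15],
    "omelet": [0.1, 0.05, 0.95],
}

sim_royal = cosine_similarity(embeddings["king"], embeddings["queen"])
sim_food = cosine_similarity(embeddings["king"], embeddings["omelet"])
```

Here `sim_royal` comes out much higher than `sim_food`, mirroring the intuition that "King" sits near "Queen" and far from unrelated words.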

The response process is a series of predictions:

- The model analyzes the prompt.

- It computes a probability for every possible next word (or “token”) and selects a likely candidate to start the response.

- Once that word is chosen, it feeds it back into its analysis to predict the next word.

- It repeats this process until it reaches a termination point.
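The loop described in these steps can be sketched with a toy model. The probability table below is entirely hypothetical, and it looks only at the last token, whereas GPT conditions on the whole preceding text. This version always picks the most likely token.

```python
# Hypothetical next-token probabilities (assumption: hand-written toy table)
NEXT_TOKEN_PROBS = {
    "<start>": {"the": 0.6, "a": 0.4},
    "the": {"cat": 0.7, "dog": 0.3},
    "a": {"cat": 0.5, "dog": 0.5},
    "cat": {"sleeps": 0.8, "<end>": 0.2},
    "dog": {"sleeps": 0.6, "<end>": 0.4},
    "sleeps": {"<end>": 1.0},
}

def generate(max_tokens=10):
    """Generate tokens one at a time until an end marker or the length limit."""
    tokens = ["<start>"]
    for _ in range(max_tokens):
        probs = NEXT_TOKEN_PROBS[tokens[-1]]
        # Pick the token with the highest probability
        next_token = max(probs, key=probs.get)
        if next_token == "<end>":  # termination point
            break
        tokens.append(next_token)  # feed the choice back in
    return tokens[1:]  # drop the start marker

result = generate()
print(" ".join(result))  # prints "the cat sleeps"
```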

What makes the result impressive is that GPT doesn’t always choose the most likely word (which would make it repetitive and boring), but uses a “temperature” setting (controlled randomness) to vary its style and creativity.
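A minimal sketch of how temperature reshapes the probabilities before a token is drawn at random. The token list and raw scores ("logits") are hypothetical; the function names are illustrative and do not come from any particular library.

```python
import math
import random

def softmax_with_temperature(logits, temperature):
    """Convert raw scores to probabilities; temperature must be > 0."""
    scaled = [x / temperature for x in logits]
    m = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def sample_token(tokens, logits, temperature, rng):
    """Draw one token at random according to the adjusted probabilities."""
    probs = softmax_with_temperature(logits, temperature)
    return rng.choices(tokens, weights=probs, k=1)[0]

# Hypothetical candidates and scores (assumption: made up for illustration)
tokens = ["cat", "dog", "omelet"]
logits = [2.0, 1.0, 0.1]

cold = softmax_with_temperature(logits, 0.2)  # low temperature: sharper
hot = softmax_with_temperature(logits, 2.0)   # high temperature: flatter

rng = random.Random(0)  # seeded so the run is reproducible
pick = sample_token(tokens, logits, 1.0, rng)
```

At low temperature almost all of the probability goes to the top-scoring token, so the output is predictable. At high temperature the distribution flattens and less likely tokens are chosen more often.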

IV. The crucial step: Human alignment (RLHF)

If you simply give a machine the entire internet to read, it risks becoming aggressive or providing dangerous advice, as the internet contains both the best and worst of humanity.

To make GPT “personable,” OpenAI uses Reinforcement Learning from Human Feedback (RLHF). Human reviewers interacted with early versions of the model and rated or ranked its responses: “This is a good answer,” “This is rude,” “This is false.” Those judgments were then used as a training signal to steer the model toward the preferred kind of answer.

Through this process, the AI learned to adopt the posture of a helpful, neutral, and ethically-bound assistant. This final layer transforms raw technology into a mass-market product.
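One common way to turn human judgments into a training signal is to train a separate "reward model" on pairs of responses, where a reviewer has marked one as better. The sketch below shows a standard pairwise preference loss for that setting. It is an illustration of the general idea, not a description of OpenAI's exact pipeline, and the reward scores are hypothetical numbers.

```python
import math

def preference_loss(reward_chosen, reward_rejected):
    """Pairwise loss: -log(sigmoid(reward_chosen - reward_rejected)).

    The loss is small when the reward model scores the human-preferred
    response higher than the rejected one, and large otherwise.
    """
    diff = reward_chosen - reward_rejected
    return math.log(1.0 + math.exp(-diff))

# Hypothetical reward scores (assumption: made up for illustration)
agrees_with_human = preference_loss(2.0, 0.0)     # preferred answer scored higher
disagrees_with_human = preference_loss(0.0, 2.0)  # preferred answer scored lower
```

Minimizing this loss over many labeled pairs pushes the reward model to agree with the reviewers. The language model is then tuned to produce answers that the reward model scores highly.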

V. Challenges and limitations

Despite its power, GPT remains a tool with blind spots:

- Hallucination: Because it seeks to be statistically credible, it may invent a biography or a legal source that doesn’t exist, simply because the words “sound” right together.

- Lack of consciousness: GPT feels nothing. It has no understanding of the physical world. If it explains how to cook an omelet, it doesn’t know what heat or the smell of an egg is; it only knows the linguistic relationship between those concepts.

- Environmental cost: Training and running these models requires significant electricity and water (to cool servers).

Conclusion

GPT is far more than just word-processing software. It is a cognitive infrastructure. Just as electricity allowed for the mechanization of physical labor, GPT is beginning to automate certain forms of intellectual labor.

By understanding that GPT stands for Generative (creation), Pre-trained (massive knowledge), and Transformer (contextual understanding), we can better appreciate why this technology marks a turning point. We are only at the beginning of exploring what these increasingly lightweight and intelligent models will bring to science, education, and artistic creation.