“A picture is worth a thousand words.” For humans, analyzing our visual environment is an innate act that requires no conscious effort.

In a fraction of a second, our brains identify shapes, assess distances, detect motion, and anticipate trajectories. For a machine, however, the situation is entirely different: an image is nothing more than a massive grid of numbers, a matrix of pixel values with no inherent meaning, and a video is simply a sequence of such grids.
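
To make this concrete, here is a minimal sketch in plain Python of what a machine actually receives. The tiny grid, the Full HD resolution, and the 30 frames-per-second rate are illustrative assumptions, not properties of any particular camera. In practice, libraries such as NumPy or OpenCV store these grids as arrays.

```python
def values_per_frame(width: int, height: int, channels: int) -> int:
    """Count the raw numbers a machine receives for a single frame."""
    return width * height * channels

# A tiny 3x4 grayscale "image": each value is a pixel brightness from
# 0 (black) to 255 (white). Nothing in it says "edge" or "object".
tiny_image = [
    [0, 0, 255, 255],
    [0, 0, 255, 255],
    [30, 30, 200, 200],
]

# One Full HD color frame: 1920 x 1080 pixels, 3 channels (R, G, B).
full_hd = values_per_frame(1920, 1080, 3)

# A video is a sequence of frames: one second at 30 fps is 30 such grids.
one_second = 30 * full_hd

print(len(tiny_image), "rows x", len(tiny_image[0]), "columns")
print(f"{full_hd:,} values per Full HD frame")      # 6,220,800
print(f"{one_second:,} values per second of video")  # 186,624,000
```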

Enabling computers to go beyond this purely numerical stage to “see,” interpret, and understand the physical world is the core objective of Computer Vision. Today, this major branch of Artificial Intelligence (AI) is stepping out of the research labs to deeply transform our daily lives, from medicine to the automotive industry.

1. Definition: The eyes of Artificial Intelligence

Computer Vision is an interdisciplinary field of artificial intelligence and computer science that enables digital systems to acquire, process, analyze, and understand visual data in order to make decisions or take automated actions.

If AI as a whole seeks to emulate the reasoning capacity of the human brain, Computer Vision plays the role of its visual cortex. The ultimate goal is not just to capture images (which cameras have done exceptionally well for decades), but to extract meaning from them.

For a long time, computer vision relied on hand-crafted geometric and mathematical rules designed by engineers. The discipline made a major leap forward with the advent of Deep Learning (deep neural networks) and the abundance of digital data (Big Data). Today, models learn to recognize objects from millions of labeled examples rather than from explicitly programmed rules.

2. How does computer vision work?

For an algorithm to identify a visual element, such as distinguishing a pedestrian from a light pole on a sidewalk, it must follow a complex process broken down into several key stages.

Data acquisition

It all begins with capturing the scene. The system relies on physical sensors: high-definition cameras, thermal cameras, medical scanners, or LiDAR sensors (which measure distances using laser pulses). These devices convert physical measurements of the scene into digital signals.
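
Many LiDAR sensors measure distance by time of flight: the sensor emits a laser pulse and times its echo. Because the light travels to the object and back, the distance is half the round trip, d = c × t / 2. The minimal sketch below covers only that formula; the 200-nanosecond echo is an invented example, and real sensors add calibration and scanning logic omitted here.

```python
SPEED_OF_LIGHT_M_PER_S = 299_792_458  # exact value, in meters per second

def tof_distance_m(round_trip_time_s: float) -> float:
    """Distance to a target from a laser pulse's round-trip time.

    The pulse travels to the object and back, so the one-way distance
    is half of (speed of light x elapsed time).
    """
    return SPEED_OF_LIGHT_M_PER_S * round_trip_time_s / 2

# Example: an echo received 200 nanoseconds after the pulse was emitted.
print(f"{tof_distance_m(200e-9):.2f} m")  # 29.98 m
```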

Image preprocessing

Before analyzing the content, the system often needs to “clean” the image to make the algorithms’ work easier. This involves reducing noise (image grain), adjusting contrast, resizing, or converting the image to grayscale if color is not crucial for the task at hand.
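
The sketch below strings together simplified versions of these steps in plain Python: grayscale conversion, a 3x3 mean filter for noise, a linear contrast stretch, and 2x downsampling. The pipeline order, the function names, and the test image are assumptions for illustration; real systems typically use optimized library routines (OpenCV, for example) and pick the steps that suit the task.

```python
def to_grayscale(rgb):
    """RGB rows of (R, G, B) tuples -> grayscale rows, using the common
    ITU-R BT.601 luma weights (other weightings exist)."""
    return [[round(0.299 * r + 0.587 * g + 0.114 * b) for (r, g, b) in row]
            for row in rgb]

def mean_filter_3x3(gray):
    """Reduce noise: replace each pixel with the average of its 3x3
    neighborhood (neighbors outside the image are simply skipped)."""
    h, w = len(gray), len(gray[0])
    out = []
    for y in range(h):
        row = []
        for x in range(w):
            window = [gray[j][i]
                      for j in range(max(0, y - 1), min(h, y + 2))
                      for i in range(max(0, x - 1), min(w, x + 2))]
            row.append(round(sum(window) / len(window)))
        out.append(row)
    return out

def stretch_contrast(gray):
    """Linearly rescale values so the darkest pixel becomes 0 and the
    brightest becomes 255."""
    lo = min(min(row) for row in gray)
    hi = max(max(row) for row in gray)
    if hi == lo:  # flat image: nothing to stretch
        return [row[:] for row in gray]
    return [[round((v - lo) * 255 / (hi - lo)) for v in row] for row in gray]

def downsample_2x(gray):
    """Halve the resolution by keeping every other pixel (nearest neighbor)."""
    return [row[::2] for row in gray[::2]]

def preprocess(rgb):
    """Hypothetical pipeline order; real systems choose steps per task."""
    return downsample_2x(stretch_contrast(mean_filter_3x3(to_grayscale(rgb))))

# A dull, low-contrast 4x4 test image (gray pixels from 100 to 130).
rgb = [[(v, v, v) for v in (100, 110, 120, 130)] for _ in range(4)]
print(preprocess(rgb))  # [[0, 191], [0, 191]]
```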

Feature extraction

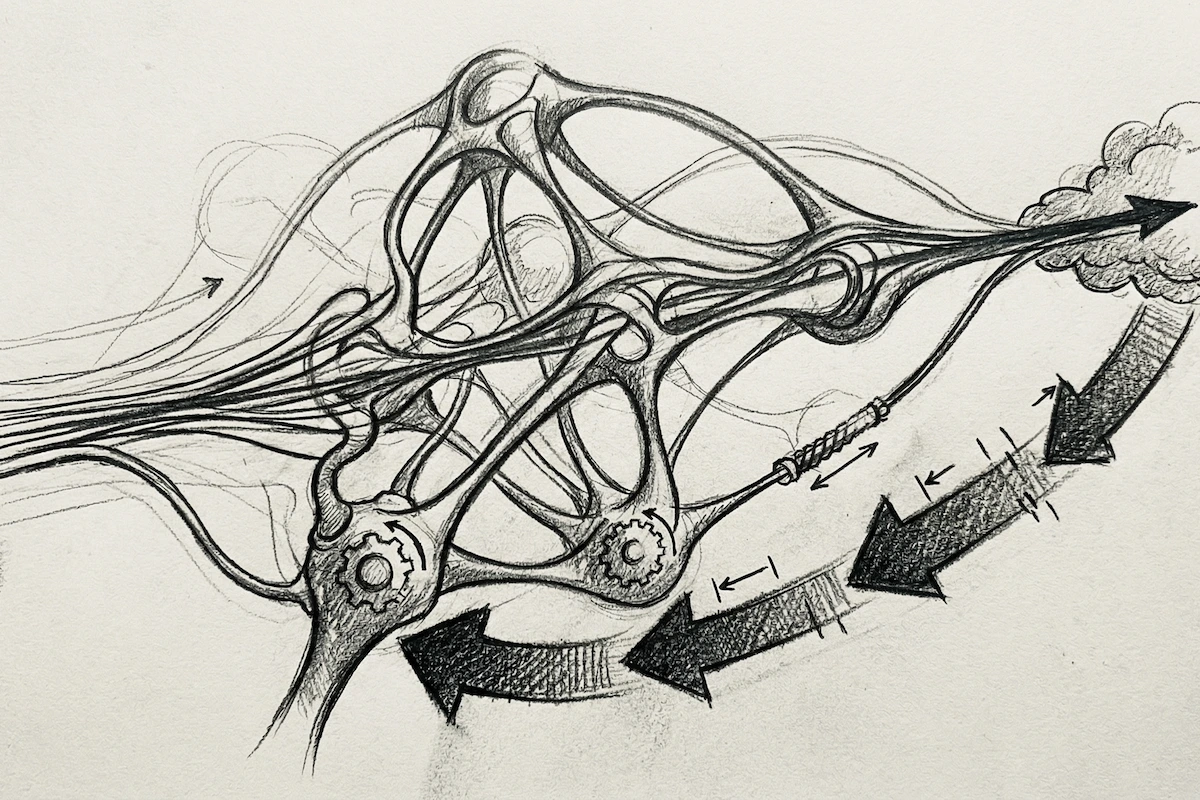

This is where artificial neural networks come into play, specifically CNNs (Convolutional Neural Networks). The algorithm scans the image at multiple levels of abstraction:

- The first layers of the network detect the simplest elements: edges in various orientations, simple corners, and color contrasts.

- The intermediate layers combine these edges to identify geometric shapes, textures, or patterns (such as circles or skin textures).

- The deep layers combine these shapes to recognize complex structures (an eye, a wheel, an airplane wing).
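
The core operation behind these layers is convolution: a small grid of weights (a kernel) slides across the image and produces a strong response wherever the local pattern matches. The sketch below applies a hand-picked, Sobel-like vertical-edge kernel, followed by the ReLU activation and 2x2 max pooling commonly used in CNNs. In a trained network the kernel weights are learned from data rather than chosen by hand, and real layers stack many kernels over multiple channels; this is a single-kernel illustration.

```python
def convolve2d(image, kernel):
    """'Valid' 2D convolution as used in CNN layers (strictly speaking,
    cross-correlation: the kernel is not flipped)."""
    kh, kw = len(kernel), len(kernel[0])
    h, w = len(image), len(image[0])
    return [[sum(image[y + j][x + i] * kernel[j][i]
                 for j in range(kh) for i in range(kw))
             for x in range(w - kw + 1)]
            for y in range(h - kh + 1)]

def relu(fmap):
    """Keep positive responses, zero out negative ones."""
    return [[max(0, v) for v in row] for row in fmap]

def max_pool_2x2(fmap):
    """Downsample by keeping the strongest response in each 2x2 block."""
    return [[max(fmap[y][x], fmap[y][x + 1], fmap[y + 1][x], fmap[y + 1][x + 1])
             for x in range(0, len(fmap[0]) - 1, 2)]
            for y in range(0, len(fmap) - 1, 2)]

# Sobel-like kernel that responds to dark-to-bright vertical edges.
VERTICAL_EDGE = [
    [-1, 0, 1],
    [-2, 0, 2],
    [-1, 0, 1],
]

# 6x8 image: dark left half, bright right half.
image = [[0, 0, 0, 0, 255, 255, 255, 255] for _ in range(6)]

feature_map = relu(convolve2d(image, VERTICAL_EDGE))
print(feature_map[0])             # [0, 0, 1020, 1020, 0, 0]: only the edge fires
print(max_pool_2x2(feature_map))  # [[0, 1020, 0], [0, 1020, 0]]
```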

Classification and decision making

Once the features are extracted, the final layers of the model map them to the categories it learned during its training phase. It then assigns a label to the image along with a confidence score, usually expressed as a probability. The system can then trigger an action, such as emergency braking if the object identified in its path is a human being.
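
A common way to obtain that confidence score is the softmax function, which turns the network’s raw output scores into probabilities that sum to 1. The sketch below pairs it with a toy decision rule. The class names, the raw scores, and the 0.8 braking threshold are all hypothetical, and a real driving system would combine many frames and sensors before acting.

```python
import math

LABELS = ["pedestrian", "light pole", "cyclist"]  # hypothetical classes
BRAKE_THRESHOLD = 0.8                            # hypothetical threshold

def softmax(scores):
    """Convert raw scores (logits) into probabilities that sum to 1."""
    m = max(scores)  # subtracting the max avoids overflow in exp()
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def decide(logits):
    """Pick the most probable label, then apply a toy action rule."""
    probs = softmax(logits)
    best = max(range(len(probs)), key=lambda i: probs[i])
    label, confidence = LABELS[best], probs[best]
    if label == "pedestrian" and confidence >= BRAKE_THRESHOLD:
        action = "EMERGENCY_BRAKE"
    else:
        action = "KEEP_MONITORING"
    return label, confidence, action

label, confidence, action = decide([4.0, 1.0, 0.5])
print(f"{label} ({confidence:.0%}) -> {action}")  # pedestrian (93%) -> EMERGENCY_BRAKE
```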

3. Core capabilities of the technology

Computer vision is not limited to a single type of exercise. Depending on the developers’ needs, it revolves around several fundamental tasks.

Image classification

This is the simplest form of Computer Vision. It consists of assigning a single category to an entire image. The algorithm answers the question “What does this image show as a whole?” This is the technology that automatically sorts your vacation photos on your smartphone into albums like “Beach,” “Mountain,” or “Pets.”

Object detection

More complex than classification, object detection aims to precisely locate one or more specific elements within a scene by surrounding each one with a bounding box. Here, the AI answers the question “What objects are present, and where are they?” This is an essential building block for surveillance and traffic management.
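
A detector’s output is typically a list of labeled boxes. A standard way to measure how well a predicted box matches a reference box is Intersection over Union (IoU): the overlap area divided by the combined area of the two boxes. The sketch below assumes boxes given as top-left (x1, y1) and bottom-right (x2, y2) pixel corners; the coordinates are made up for illustration.

```python
from typing import NamedTuple

class Box(NamedTuple):
    """Labeled axis-aligned box: (x1, y1) top-left, (x2, y2) bottom-right."""
    label: str
    x1: float
    y1: float
    x2: float
    y2: float

def area(b: Box) -> float:
    return max(0, b.x2 - b.x1) * max(0, b.y2 - b.y1)

def iou(a: Box, b: Box) -> float:
    """Intersection over Union: 1.0 for identical boxes, 0.0 if disjoint."""
    ix1, iy1 = max(a.x1, b.x1), max(a.y1, b.y1)
    ix2, iy2 = min(a.x2, b.x2), min(a.y2, b.y2)
    inter = max(0, ix2 - ix1) * max(0, iy2 - iy1)
    union = area(a) + area(b) - inter
    return inter / union if union > 0 else 0.0

predicted = Box("car", 50, 50, 150, 150)
ground_truth = Box("car", 60, 60, 160, 160)
print(f"IoU = {iou(predicted, ground_truth):.2f}")  # IoU = 0.68
```

IoU is also what many detectors use to discard duplicate boxes that overlap the same object heavily.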

Image segmentation

Segmentation goes even further in terms of precision. Instead of surrounding an object with a loose rectangular box, it classifies the image pixel by pixel to isolate each object’s exact contours. We distinguish between semantic segmentation (which assigns all cars in a street the same label) and instance segmentation (which distinguishes each individual car). This is the technique that lets you blur or replace your background during a video call on Teams or Zoom.
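
The difference between semantic and instance segmentation can be illustrated on a toy mask. Below, a hypothetical semantic mask marks every “car” pixel with 1; a breadth-first flood fill then gives each connected blob its own ID. This connected-components shortcut is a simplification that only works when objects do not touch; dedicated instance segmentation models, such as Mask R-CNN, predict separate instances directly.

```python
from collections import deque

# Hypothetical semantic mask: 1 = "car" pixel, 0 = background.
# Semantic segmentation stops here: all car pixels share one label.
semantic_mask = [
    [1, 1, 0, 0, 0],
    [1, 1, 0, 1, 1],
    [0, 0, 0, 1, 1],
    [0, 0, 0, 0, 0],
]

def label_instances(mask):
    """Assign each 4-connected blob of 1s its own ID (1, 2, ...) via BFS."""
    h, w = len(mask), len(mask[0])
    labels = [[0] * w for _ in range(h)]
    count = 0
    for y in range(h):
        for x in range(w):
            if mask[y][x] and not labels[y][x]:
                count += 1  # unlabeled car pixel: start a new instance
                labels[y][x] = count
                queue = deque([(y, x)])
                while queue:
                    cy, cx = queue.popleft()
                    for ny, nx in ((cy - 1, cx), (cy + 1, cx), (cy, cx - 1), (cy, cx + 1)):
                        if 0 <= ny < h and 0 <= nx < w and mask[ny][nx] and not labels[ny][nx]:
                            labels[ny][nx] = count
                            queue.append((ny, nx))
    return labels, count

instances, n_cars = label_instances(semantic_mask)
print("cars found:", n_cars)  # cars found: 2
for row in instances:
    print(row)
```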

Facial recognition and analysis

This technology maps the key points of a human face (distance between the eyes, jaw shape, nose height) to create a distinctive biometric signature. It is used to authenticate identity (as with Apple’s Face ID), but it can also be configured to analyze facial expressions and estimate emotions such as joy or surprise, or states such as fatigue.
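
The matching step can be sketched as comparing two numeric “signatures” and accepting a match when they are close enough. In this minimal Python sketch, the landmark names, coordinates, chosen ratios, and 0.1 threshold are all hypothetical. Production systems generally compare embeddings learned by deep networks rather than a handful of hand-picked distances, but the compare-against-a-threshold logic is similar. Dividing by the distance between the eyes makes the signature independent of how large the face appears in the photo.

```python
import math

# Hypothetical landmark format: name -> (x, y) pixel coordinates,
# as a face landmark detector might output.
Landmarks = dict[str, tuple[float, float]]

def face_signature(lm: Landmarks) -> list[float]:
    """Scale-invariant feature vector: a few facial distances divided by
    the distance between the eyes."""
    eye_gap = math.dist(lm["left_eye"], lm["right_eye"])
    return [
        math.dist(lm["nose_tip"], lm["chin"]) / eye_gap,       # lower-face height
        math.dist(lm["left_eye"], lm["nose_tip"]) / eye_gap,   # eye to nose
        math.dist(lm["left_jaw"], lm["right_jaw"]) / eye_gap,  # jaw width
    ]

def is_same_person(a: Landmarks, b: Landmarks, threshold: float = 0.1) -> bool:
    """Accept a match when the two signatures are close (Euclidean distance)."""
    return math.dist(face_signature(a), face_signature(b)) < threshold

enrolled = {"left_eye": (30, 40), "right_eye": (70, 40), "nose_tip": (50, 60),
            "chin": (50, 100), "left_jaw": (20, 80), "right_jaw": (80, 80)}
# Same face photographed closer (every coordinate twice as large).
closer = {name: (2 * x, 2 * y) for name, (x, y) in enrolled.items()}
# A different face with a longer lower face and a wider jaw.
stranger = {"left_eye": (30, 40), "right_eye": (70, 40), "nose_tip": (50, 70),
            "chin": (50, 120), "left_jaw": (15, 85), "right_jaw": (85, 85)}

print(is_same_person(enrolled, closer))    # True
print(is_same_person(enrolled, stranger))  # False
```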

4. Real-world applications transforming industries

Far from being a theoretical concept, Computer Vision is already deeply embedded in the global economy and is redefining the standards of many sectors.

Healthcare and medicine

Computer vision has become a valuable assistant for radiologists and oncologists. By analyzing MRIs, X-rays, or mammograms, AI models trained on large collections of annotated medical images can help detect small tumors, subtle fractures, or early cardiovascular anomalies. This can speed up diagnosis and reduce the risk of human error.

Transportation and autonomous vehicles

The promise of self-driving cars relies heavily on Computer Vision. To navigate without a driver, the vehicle must analyze the video streams from its onboard cameras in real time. The artificial intelligence must instantly interpret road signs, read lane markings, anticipate cyclist behavior, and adapt to weather conditions such as rain or fog.
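
A quick back-of-the-envelope calculation shows why “real time” is demanding. The sketch below assumes a hypothetical 30 frames-per-second camera and a speed of 100 km/h; actual camera rates and system latencies vary from vehicle to vehicle.

```python
def frame_budget_ms(fps: float) -> float:
    """Maximum processing time per frame to keep up with the camera."""
    return 1000 / fps

def meters_per_frame(speed_kmh: float, fps: float) -> float:
    """Distance the vehicle travels between two consecutive frames."""
    speed_m_per_s = speed_kmh * 1000 / 3600
    return speed_m_per_s / fps

# Illustrative values: highway speed, 30 fps camera.
print(f"{frame_budget_ms(30):.1f} ms per frame")                 # 33.3 ms per frame
print(f"{meters_per_frame(100, 30):.2f} m traveled between frames")  # 0.93 m
```

In other words, every frame must be fully analyzed in about a thirtieth of a second, during which the car has already moved almost a meter.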

Manufacturing and quality control

In manufacturing plants (automotive, electronics, food processing), computer vision is replacing manual visual inspections, which are tiring and prone to lapses in attention. High-speed cameras examine every product leaving the assembly line to track down the slightest manufacturing defect, microscopic scratch, or misaligned component, ensuring a consistent level of quality.
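
One simple, classical way to flag defects is to compare each product image with a defect-free reference (“golden”) image and flag pixels that differ by more than a tolerance. This sketch assumes the images are already aligned, the same size, and in grayscale; the tolerance of 40 gray levels and the test data are hypothetical. Many modern lines use trained models instead, which cope better with natural variation between products.

```python
def find_defects(reference, sample, tolerance=40):
    """Return (row, col) positions where the inspected image differs from
    the defect-free reference by more than `tolerance` gray levels.
    Assumes both images are aligned and the same size."""
    return [(y, x)
            for y, (ref_row, row) in enumerate(zip(reference, sample))
            for x, (r, s) in enumerate(zip(ref_row, row))
            if abs(r - s) > tolerance]

reference = [[200] * 5 for _ in range(4)]  # uniform, defect-free surface
sample = [row[:] for row in reference]
sample[2][3] = 90   # a dark scratch
sample[0][1] = 215  # slight lighting variation, within tolerance

defects = find_defects(reference, sample)
print("REJECT" if defects else "PASS", defects)  # REJECT [(2, 3)]
```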

Retail and commerce

The technology is also reshaping the shopping experience. Autonomous store concepts use a network of cameras and weight sensors to track customer movements. The system records which products are picked up or put back on the shelves, allowing customers to walk out of the store without passing through a traditional checkout lane; billing happens automatically through their digital account.

5. Limitations and ethical challenges of the discipline

Despite sometimes outperforming humans on narrowly defined tasks, Computer Vision still suffers from structural limitations.

The main weakness lies in its lack of flexibility and “common sense.” An AI can be misled by subtle variations in lighting, deceptive reflections, or occlusion (an object 80% hidden behind another). Furthermore, so-called “adversarial attacks,” which make small, carefully computed changes to an image’s pixels that are imperceptible to the human eye, can completely skew a neural network’s predictions. For example, such an attack could cause an AI to mistake a “Stop” sign for a speed limit sign.
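
One well-known technique, the fast gradient sign method (FGSM), nudges every pixel by a tiny amount ε in the direction that pushes the model’s score away from the correct class. The sketch below applies it to a toy linear classifier, where the gradient of the score with respect to the input is simply the weight vector; with a deep network, the gradient would come from backpropagation. The weights, image, and ε are invented for illustration: each pixel moves by about half a gray level, yet because the changes add up across a thousand pixels, the prediction flips.

```python
def sign(v: float) -> int:
    return (v > 0) - (v < 0)

def score(w, x, b):
    """Linear model: positive score means "stop", otherwise "speed limit"."""
    return sum(wi * xi for wi, xi in zip(w, x)) + b

def predict(w, x, b):
    return "stop" if score(w, x, b) > 0 else "speed limit"

def fgsm_linear(w, x, epsilon):
    """FGSM against a linear model: the gradient of the score w.r.t. the
    input is w, so move each pixel by epsilon against sign(w), then clip
    back to the valid [0, 1] range."""
    return [min(1.0, max(0.0, xi - epsilon * sign(wi))) for wi, xi in zip(w, x)]

N_PIXELS = 1000
w = [0.01 if i % 2 == 0 else -0.01 for i in range(N_PIXELS)]  # hypothetical weights
b = 0.01                                                     # hypothetical bias
x = [0.5] * N_PIXELS  # hypothetical image, pixel values in [0, 1]

x_adv = fgsm_linear(w, x, epsilon=0.002)
max_change = max(abs(a - c) for a, c in zip(x_adv, x))
print(predict(w, x, b), "->", predict(w, x_adv, b))  # stop -> speed limit
print(f"largest pixel change: {max_change:.3f} ({max_change * 255:.1f} gray levels)")
```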

On a societal level, the rise of facial recognition raises profound ethical debates regarding privacy, mass surveillance, and individual freedoms. Algorithmic bias is another major concern: if a model is trained on a dataset that lacks diversity, it may perform noticeably worse for underrepresented groups. This raises serious questions of discrimination, particularly in law enforcement or hiring applications.
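
A first practical safeguard is to measure performance separately for each group instead of relying on a single overall figure. The sketch below uses made-up evaluation records (not real results) to show how a respectable overall accuracy can hide a large gap between two hypothetical groups.

```python
from collections import defaultdict

def accuracy_by_group(records):
    """records: iterable of (group, predicted_label, true_label).
    Returns {group: accuracy} so performance gaps become visible."""
    correct, total = defaultdict(int), defaultdict(int)
    for group, predicted, actual in records:
        total[group] += 1
        correct[group] += predicted == actual
    return {g: correct[g] / total[g] for g in total}

# Made-up evaluation records for a hypothetical face-matching model.
results = (
    [("group_A", "match", "match")] * 95 + [("group_A", "no_match", "match")] * 5
    + [("group_B", "match", "match")] * 70 + [("group_B", "no_match", "match")] * 30
)

overall = sum(p == t for _, p, t in results) / len(results)
print(f"overall: {overall:.1%}")  # overall: 82.5%
for group, acc in sorted(accuracy_by_group(results).items()):
    print(f"{group}: {acc:.1%}")  # group_A: 95.0%, group_B: 70.0%
```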

Conclusion

Computer Vision represents one of the most fascinating advances of the digital era. By successfully endowing machines with a sharp visual sense, humanity has paved the way for unprecedented levels of complex automation.

As algorithms mature and edge closer to true contextual understanding, the boundary between human perception and artificial perception will continue to blur, redefining our relationship with technology.